A Single-Threaded Asynchronous Video Player in C with FFmpeg

目录

- 前言

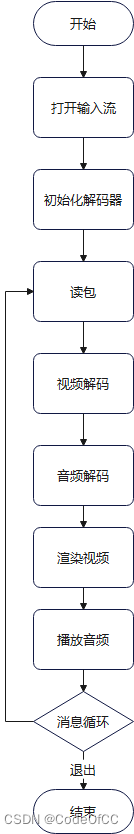

- 一、播放流程

- 二、关键实现

- 1.视频

- 2、音频

- 3、时钟同步

- 4、异步读包

- 三、完整代码

- 四、使用示例

- 总结

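Preface

ffplay is a good player, but it is built on multiple threads: playing one video normally takes at least four of them, namely a packet-reading thread, a video decoding thread, an audio decoding thread and a video rendering thread. When several streams have to play at once, the thread count inevitably grows; playing 8 videos, for example, takes 32 threads, which costs a fair amount of performance. So I tried building a player on a single thread, and in practice it works: a local file can be played entirely on one thread, while a network stream needs one extra thread so that packet reading can be asynchronous.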

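I. Playback Flow

II. Key Implementation

Because the player runs on a single thread, a few details need attention.

1. Video

(1) Decoding

Enable multithreaded decoding or use a hardware decoder so that decoding keeps up; on a single thread, slow decoding directly makes the video stutter.

//Enable multithreaded decoding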

if (!av_dict_get(opts, "threads", NULL, 0))

av_dict_set(&opts, "threads", "auto", 0);

//Open the decoder

if (avcodec_open2(decoder->codecContext, codec, &opts) < 0) {

LOG_ERROR("Could not open codec");

av_dict_free(&opts);

return ERRORCODE_DECODER_OPENFAILED;

}

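Or, depending on the situation, use a hardware decoder:

//hevc_qsv is the Intel Quick Sync HEVC decoder; it only exists if FFmpeg was built with QSV support

codec = avcodec_find_decoder_by_name("hevc_qsv");

//Fall back to the default software decoder when it is unavailable

if (!codec)

codec = avcodec_find_decoder(decoder->codecContext->codec_id);

//Open the decoder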

if (avcodec_open2(decoder->codecContext, codec, &opts) < 0) {

LOG_ERROR("Could not open codec");

av_dict_free(&opts);

return ERRORCODE_DECODER_OPENFAILED;

}

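2. Audio

(1) Correcting the clock

Audio plays as a continuous stream, so its clock could in principle be computed from the amount of data played. When packets are lost or the player seeks, however, a clock accumulated from the data amount drifts away from the real position, so the clock should be corrected each time decoded data is put into the play queue.

For synchronize_setClockTime, see the article 《C语言 将音视频时钟同步封装成通用模块》 ("Packaging audio/video clock synchronization as a general-purpose module in C"). After audio decoding: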

//Read a decoded audio frame

av_fifo_generic_read(play->audio.decoder.fifoFrame, &frame, sizeof(AVFrame*), NULL);

//Synchronize (correct) the clock

AVRational timebase = play->formatContext->streams[audio->decoder.streamIndex]->time_base;

//Timestamp of the current frame, in seconds

double pts = (double)frame->pts * timebase.num / timebase.den;

//Subtracting the duration of the data still waiting in the play queue gives the current audio clock

pts -= (double)av_audio_fifo_size(play->audio.playFifo) / play->audio.spec.freq;

synchronize_setClockTime(&play->synchronize, &play->synchronize.audio, pts);

//Synchronize (correct) the clock (end)

//Write to the play queue

av_audio_fifo_write(play->audio.playFifo, (void**)&data, samples);
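The correction above is plain arithmetic, so it can be sketched without FFmpeg or SDL. This is a minimal sketch under stated assumptions: `DemoClock`, `ts_to_seconds`, `corrected_audio_clock`, `clock_set` and `clock_get` are hypothetical names standing in for `Clock` and `synchronize_setClockTime`, timestamps are passed as raw ticks plus a time base, and the current time `now` is passed in explicitly instead of calling `getCurrentTime()`, so results are deterministic.

```c
#include <stdint.h>

/* Hypothetical stand-in for the article's Clock: startTime is the wall-clock
   instant at which pts 0 would have played, so clock time = now - startTime. */
typedef struct {
    double startTime;   /* seconds */
    double currentPts;  /* last pts the clock was set to, seconds */
} DemoClock;

/* Stream timestamp in ticks -> seconds, using the stream time base num/den. */
static double ts_to_seconds(int64_t ticks, int num, int den) {
    return (double)ticks * num / den;
}

/* Audio clock when a decoded frame enters the play FIFO: the frame's pts minus
   the playing time of the samples already queued ahead of it. */
static double corrected_audio_clock(int64_t framePts, int num, int den,
                                    int queuedSamples, int sampleRate) {
    return ts_to_seconds(framePts, num, den) - (double)queuedSamples / sampleRate;
}

/* Re-anchor the clock so that clock_get(now) == pts at this instant
   (same idea as synchronize_setClockTime, with `now` passed in). */
static void clock_set(DemoClock* c, double now, double pts) {
    c->currentPts = pts;
    c->startTime = now - pts;
}

/* Current clock time: advances in step with the wall clock after each re-anchor. */
static double clock_get(const DemoClock* c, double now) {
    return now - c->startTime;
}
```

Because the clock is re-anchored from each queued frame's own pts, an error introduced by a lost packet or a seek lasts only until the next decoded frame instead of accumulating.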

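3. Clock Synchronization

Clock synchronization happens in three places: one is right after audio decoding, covered in 2.(1) above; the other two are audio playback and video rendering.

(1) Audio playback

For synchronize_updateAudio, see 《c语言 将音视频时钟同步封装成通用模块》.

//SDL audio callback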

static void audio_callback(void* userdata, uint8_t* stream, int len) {

Play* play = (Play*)userdata;

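//Number of samples to write

int samples = play->audio.spec.samples;

//Clock synchronization: get the number of samples that should actually be written. When syncing to audio, it always equals the number requested.

samples = synchronize_updateAudio(&play->synchronize, samples, play->audio.spec.freq);

//(rest omitted)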

}
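When audio is not the master clock, `synchronize_updateAudio` (in the complete code) nudges the number of samples consumed per callback by the clock difference and clamps the result. Below is a minimal sketch of only that adjustment, assuming `diff` is the master clock time minus the audio clock time in seconds; `adjust_audio_samples` is a hypothetical helper, not part of the player.

```c
/* Hypothetical helper mirroring the clamping in synchronize_updateAudio.
   samples:    samples the device asks for in one callback
   sampleRate: device sample rate in Hz
   diff:       master clock time minus audio clock time, in seconds
   Returns how many samples to consume from the play queue. */
static int adjust_audio_samples(int samples, int sampleRate, double diff) {
    int wanted = samples;
    if (diff > 0.01 || diff < -0.01)   /* ignore drift of 10 ms or less */
        wanted += (int)(diff * sampleRate);
    if (wanted < 0)
        wanted = 0;                    /* audio far ahead: consume nothing this time */
    if (wanted > samples * 2)
        wanted = samples * 2;          /* audio far behind: consume at most two buffers */
    return wanted;
}
```

In `audio_callback`, a count above the buffer size is handled by draining the surplus from the play FIFO, and a count below it leaves the rest of the buffer filled with silence; that is how audio catches up with, or waits for, the master clock.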

(2) Video playback

Put the following code where the video is rendered; for synchronize_updateVideo, see 《c语言 将音视频时钟同步封装成通用模块》.

//--------------- Clock synchronization ---------------

AVRational timebase = play->formatContext->streams[video->decoder.streamIndex]->time_base;

//pts of the video frame, in seconds

double pts = frame->pts * (double)timebase.num / timebase.den;

//Duration of the video frame, in seconds

double duration = frame->pkt_duration * (double)timebase.num / timebase.den;

double delay = synchronize_updateVideo(&play->synchronize, pts, duration);

if (delay > 0)

//Delay

{

play->wakeupTime = getCurrentTime() + delay;

return 0;

}

else if (delay < 0)

//Drop the frame

{

av_fifo_generic_read(video->decoder.fifoFrame, &frame, sizeof(AVFrame*), NULL);

av_frame_unref(frame);

av_frame_free(&frame);

return 0;

}

else

//Display

{

av_fifo_generic_read(video->decoder.fifoFrame, &frame, sizeof(AVFrame*), NULL);

}

//--------------- Clock synchronization --------------- end
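The three-way decision the rendering code makes on the return value of `synchronize_updateVideo` can be sketched as a pure function. This is a simplified sketch: it works on the video clock alone and leaves out the offset to the master clock (`sDiff`) and the duration estimate; `FrameAction` and `decide_frame` are hypothetical names. The thresholds (1 ms early tolerance, a 0.1 s cap on one delay, dropping when more than two durations late) are taken from `synchronize_updateVideo` in the complete code.

```c
/* Hypothetical outcome of one render attempt. */
typedef enum { FRAME_WAIT, FRAME_SHOW, FRAME_DROP } FrameAction;

/* now:      current time on the video clock, in seconds
   pts:      presentation time of the next frame, in seconds
   duration: length of one frame, in seconds
   *delay:   set to the time to wait before retrying (only for FRAME_WAIT) */
static FrameAction decide_frame(double now, double pts, double duration, double* delay) {
    double diff = now - pts;        /* > 0: frame is late, < 0: frame is early */
    *delay = 0;
    if (diff < -0.001) {            /* more than 1 ms early: wait */
        *delay = diff < -0.1 ? 0.1 : -diff;  /* wait at most 0.1 s, then re-check */
        return FRAME_WAIT;
    }
    if (diff > 2 * duration)        /* late beyond one extra frame: drop */
        return FRAME_DROP;
    return FRAME_SHOW;
}
```

Capping a single wait at 0.1 s keeps the loop responsive: the player returns to its event loop, sets `wakeupTime`, and re-evaluates the frame instead of sleeping through a pause or seek.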

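4. Asynchronous Packet Reading

Playing a local file on a single thread works without any problem. With a network stream, however, av_read_frame blocks: on a poor network it can stall long enough to hold up everything else running on the thread. The packet read therefore has to run on a worker thread, and the asynchrony here follows the async/await idea.

(1) async

Run av_read_frame on the thread pool.

//Read a packet asynchronously; this function is called on a worker thread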

static int packet_readAsync(void* arg)

{

Play* play = (Play*)arg;

play->eofPacket = av_read_frame(play->formatContext, &play->packet);

//Go back to the player thread to handle the packet

play_beginInvoke(play, packet_readAwait, play);

return 0;

}

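(2) await

When the read completes, the player thread is notified through the message queue, and the follow-up work runs on the player thread.

//Work done after the asynchronous read completes (runs on the player thread)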

static int packet_readAwait(void* arg)

{

Play* play = (Play*)arg;

if (play->eofPacket == 0)

{

if (play->packet.stream_index == play->video.decoder.streamIndex)

//Write to the video packet queue

{

AVPacket* packet = av_packet_clone(&play->packet);

av_fifo_generic_write(play->video.decoder.fifoPacket, &packet, sizeof(AVPacket*), NULL);

}

else if (play->packet.stream_index == play->audio.decoder.streamIndex)

//Write to the audio packet queue

{

AVPacket* packet = av_packet_clone(&play->packet);

av_fifo_generic_write(play->audio.decoder.fifoPacket, &packet, sizeof(AVPacket*), NULL);

}

av_packet_unref(&play->packet);

}

else if (play->eofPacket == AVERROR_EOF)

{

play->eofPacket = 1;

//Write an empty packet to flush the frames buffered inside the decoders

AVPacket* packet = &play->packet;

if (play->audio.decoder.fifoPacket)

av_fifo_generic_write(play->audio.decoder.fifoPacket, &packet, sizeof(AVPacket*), NULL);

if (play->video.decoder.fifoPacket)

av_fifo_generic_write(play->video.decoder.fifoPacket, &packet, sizeof(AVPacket*), NULL);

}

else

{

LOG_ERROR("read packet error!");

play->exitFlag = 1;

play->isAsyncReading = 0;

return ERRORCODE_PACKET_READFRAMEFAILED;

}

play->isAsyncReading = 0;

return 0;

}

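(3) Message handling

Call the following function on the player thread to process events. Once the await function has been posted to the message queue, the message loop picks it up and runs it on the player thread.

//Event handling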

static void play_eventHandler(Play* play) {

PlayMessage msg;

while (messageQueue_poll(&play->mq, &msg)) {

switch (msg.type)

{

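case PLAYMESSAGETYPE_INVOKE:

//The braces give the declaration its own scope; C does not allow a declaration directly after a case label

{

SDL_ThreadFunction fn = (SDL_ThreadFunction)msg.param1;

fn(msg.param2);

break;

}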

}

}

}
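The async/await flow of (1) to (3) can be tried in isolation. The sketch below is an assumption-laden stand-in, not the player's code: it uses POSIX threads instead of SDL threads and the thread pool, starts one short-lived worker per read, replaces the blocking `av_read_frame()` call with a stub, and the names `InvokeQueue`, `DemoPlayer`, `read_async`, `read_await` and `demo_run` are hypothetical. What it keeps is the shape: the worker only performs the blocking call, stores its result and posts a callback, and everything else that touches player state runs on the player thread when the message loop drains the queue.

```c
#include <pthread.h>

/* A posted "invoke" message: a callback plus its argument (cf. PlayMessage). */
typedef void (*InvokeFn)(void* arg);
typedef struct { InvokeFn fn; void* arg; } Invoke;

#define QUEUE_CAP 16

/* Hypothetical fixed-size ring buffer guarded by a mutex (cf. MessageQueue). */
typedef struct {
    Invoke items[QUEUE_CAP];
    int head, count;
    pthread_mutex_t mtx;
} InvokeQueue;

/* Any thread: append a callback for the player thread; returns 0 if full. */
static int queue_push(InvokeQueue* q, InvokeFn fn, void* arg) {
    int ok = 0;
    pthread_mutex_lock(&q->mtx);
    if (q->count < QUEUE_CAP) {
        q->items[(q->head + q->count) % QUEUE_CAP] = (Invoke){ fn, arg };
        q->count++;
        ok = 1;
    }
    pthread_mutex_unlock(&q->mtx);
    return ok;
}

/* Player thread: non-blocking pop; returns 1 if a message was taken. */
static int queue_poll(InvokeQueue* q, Invoke* out) {
    int ok = 0;
    pthread_mutex_lock(&q->mtx);
    if (q->count > 0) {
        *out = q->items[q->head];
        q->head = (q->head + 1) % QUEUE_CAP;
        q->count--;
        ok = 1;
    }
    pthread_mutex_unlock(&q->mtx);
    return ok;
}

/* Hypothetical, stripped-down player state. */
typedef struct {
    InvokeQueue mq;
    int readResult;      /* written by the worker before posting, read after polling */
    int isAsyncReading;  /* player thread only */
    int handledPackets;  /* player thread only */
} DemoPlayer;

/* "await": runs on the player thread after the read has finished. */
static void read_await(void* arg) {
    DemoPlayer* p = (DemoPlayer*)arg;
    if (p->readResult == 0)
        p->handledPackets++;  /* the player would queue the packet for its decoder here */
    p->isAsyncReading = 0;
}

/* "async": runs on the worker thread and is allowed to block. */
static void* read_async(void* arg) {
    DemoPlayer* p = (DemoPlayer*)arg;
    p->readResult = 0;        /* stub standing in for av_read_frame() */
    queue_push(&p->mq, read_await, p);
    return NULL;
}

/* Player loop: keeps at most one read in flight, returns the packets handled. */
static int demo_run(int reads) {
    DemoPlayer p = { 0 };
    pthread_t worker;
    pthread_mutex_init(&p.mq.mtx, NULL);
    while (p.handledPackets < reads) {
        if (!p.isAsyncReading) {
            p.isAsyncReading = 1;
            if (pthread_create(&worker, NULL, read_async, &p) != 0)
                break;
        }
        Invoke msg;
        while (queue_poll(&p.mq, &msg)) {
            msg.fn(msg.arg);             /* "await" body on this thread */
            pthread_join(worker, NULL);  /* the worker exits right after posting */
        }
        /* decoding, audio feeding and rendering would run here without blocking */
    }
    pthread_mutex_destroy(&p.mq.mtx);
    return p.handledPackets;
}
```

Starting a fresh thread per read keeps the sketch short; the player instead reuses threads through `threadPool_run`, which avoids paying thread creation cost for every packet. The sketch also spins while a read is pending, where the real player spends that time decoding and rendering.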

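III. Complete Code

The complete code builds as either C or C++ and uses FFmpeg 4.3 and SDL2. It relies on APIs such as av_fifo_generic_read and AVFrame.pkt_duration that later FFmpeg releases deprecated, so newer versions may need adjustments.

main.c/cpp

#include <stdio.h>

#include <stdint.h>

#include <string.h>

#include <stdarg.h>

#include <time.h>

#include <math.h>

#include "SDL.h"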

#ifdef __cplusplus

extern "C" {

#endif

#include "libavformat/avformat.h"

#include "libavcodec/avcodec.h"

#include "libswscale/swscale.h"

#include "libavutil/imgutils.h"

#include "libavutil/avutil.h"

#include "libavutil/time.h"

#include "libavutil/audio_fifo.h"

#include "libswresample/swresample.h"

#ifdef __cplusplus

}

#endif

/************************************************************************

* @Project: play

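* @Description: video player

* A single-threaded video player. Local files can be played entirely on one thread; for network streams packet reading is asynchronous,

* but the main flow is still single-threaded. Currently packets are always read asynchronously; synchronous reading for local files is not implemented yet.

* @Version: v0.0.0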

* @Author: Xin Nie

* @Create: 2022/12/12 21:21:00

* @LastUpdate: 2022/12/12 21:21:00

************************************************************************

* Copyright @ 2022. All rights reserved.

************************************************************************/

/// <summary>

/// Message queue

/// </summary>

typedef struct {

//Queue capacity

int _capacity;

//Size of one message

int _elementSize;

//Queue storage

AVFifoBuffer* _queue;

//Mutex

SDL_mutex* _mtx;

//Condition variable

SDL_cond* _cv;

}MessageQueue;

/// <summary>

/// Object pool

/// </summary>

typedef struct {

//Object storage

void* buffer;

//Object size

int elementSize;

//Number of objects

int arraySize;

//Usage state of each object: 1 in use, 0 free

int* _arrayUseState;

//Mutex

SDL_mutex* _mtx;

//Condition variable

SDL_cond* _cv;

}OjectPool;

/// <summary>

/// Thread pool

/// </summary>

typedef struct {

//Maximum number of threads

int maxThreadCount;

//Object pool of thread info

OjectPool _pool;

}ThreadPool;

/// <summary>

/// Thread info

/// </summary>

typedef struct {

//Owning thread pool

ThreadPool* _threadPool;

//Thread handle

SDL_Thread* _thread;

//Message queue

MessageQueue _queue;

//Thread callback

SDL_ThreadFunction _fn;

//Thread callback argument

void* _arg;

}ThreadInfo;

//Decoder

typedef struct {

//Decoding context

AVCodecContext* codecContext;

//Decoder

const AVCodec* codec;

//Temporary frame used while decoding

AVFrame* frame;

//Packet queue

AVFifoBuffer* fifoPacket;

//Frame queue

AVFifoBuffer* fifoFrame;

//Stream index

int streamIndex;

//End-of-decoding flag

int eofFrame;

}Decoder;

/// <summary>

/// Clock

/// </summary>

typedef struct {

//Start time

double startTime;

//Current pts

double currentPts;

}Clock;

/// <summary>

/// Clock synchronization type

/// </summary>

typedef enum {

//Sync to audio

SYNCHRONIZETYPE_AUDIO,

//Sync to video

SYNCHRONIZETYPE_VIDEO,

//Sync to an absolute clock

SYNCHRONIZETYPE_ABSOLUTE

}SynchronizeType;

/// <summary>

/// Clock synchronization object

/// </summary>

typedef struct {

/// <summary>

/// Audio clock

/// </summary>

Clock audio;

/// <summary>

/// Video clock

/// </summary>

Clock video;

/// <summary>

/// Absolute clock

/// </summary>

Clock absolute;

/// <summary>

/// Clock synchronization type

/// </summary>

SynchronizeType type;

/// <summary>

/// Estimated video frame duration

/// </summary>

double estimateVideoDuration;

/// <summary>

/// Number of frames in the duration estimate

/// </summary>

double n;

}Synchronize;

//Video module

typedef struct {

//Decoder

Decoder decoder;

//Output pixel format

enum AVPixelFormat forcePixelFormat;

//Pixel format conversion context

struct SwsContext* swsContext;

//Conversion buffer

uint8_t* swsBuffer;

//Renderer

SDL_Renderer* sdlRenderer;

//Texture

SDL_Texture* sdlTexture;

//Window

SDL_Window* screen;

//Window width

int screen_w;

//Window height

int screen_h;

//Rotation angle

double angle;

//End-of-display flag

int eofDisplay;

//Start-of-display flag

int sofDisplay;

}Video;

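//Audio module

typedef struct {

//Decoder

Decoder decoder;

//Output sample format

enum AVSampleFormat forceSampleFormat;

//Audio device ID

SDL_AudioDeviceID audioId;

//Requested audio device parameters

SDL_AudioSpec wantedSpec;

//Actual audio device parameters

SDL_AudioSpec spec;

//Resampling context

struct SwrContext* swrContext;

//Resampling buffer

uint8_t* swrBuffer;

//Play queue

AVAudioFifo* playFifo;

//Mutex protecting the play queue

SDL_mutex* mutex;

//Accumulated samples still to be played

int accumulateSamples;

//Volume offset relative to SDL_MIX_MAXVOLUME (0 leaves the volume unchanged)

int volume;

//Mixing buffer

uint8_t* mixBuffer;

//End-of-playback flag

int eofPlay;

//Start-of-playback flag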

int sofPlay;

}Audio;

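//Player

typedef struct {

//Video URL

char* url;

//Demuxing context

AVFormatContext* formatContext;

//Packet

AVPacket packet;

//Whether an asynchronous packet read is in progress

int isAsyncReading;

//End-of-packets flag

int eofPacket;

//Video module

Video video;

//Audio module

Audio audio;

//Clock synchronization

Synchronize synchronize;

//Time at which the current delay ends

double wakeupTime;

//Play a single frame (step)

int step;

//Paused

int isPaused;

//Loop playback

int isLoop;

//Exit flag

int exitFlag;

//Message queue

MessageQueue mq;

}Play;

//Player message type

typedef enum {

//Invoke a function

PLAYMESSAGETYPE_INVOKE

}PlayMessageType;

//Player message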

typedef struct {

PlayMessageType type;

void* param1;

void* param2;

}PlayMessage;

//Pixel format mapping from FFmpeg to SDL

static const struct TextureFormatEntry {

enum AVPixelFormat format;

int texture_fmt;

} sdl_texture_format_map[] = {

{ AV_PIX_FMT_RGB8, SDL_PIXELFORMAT_RGB332 },

{ AV_PIX_FMT_RGB444, SDL_PIXELFORMAT_RGB444 },

{ AV_PIX_FMT_RGB555, SDL_PIXELFORMAT_RGB555 },

{ AV_PIX_FMT_BGR555, SDL_PIXELFORMAT_BGR555 },

{ AV_PIX_FMT_RGB565, SDL_PIXELFORMAT_RGB565 },

{ AV_PIX_FMT_BGR565, SDL_PIXELFORMAT_BGR565 },

{ AV_PIX_FMT_RGB24, SDL_PIXELFORMAT_RGB24 },

{ AV_PIX_FMT_BGR24, SDL_PIXELFORMAT_BGR24 },

{ AV_PIX_FMT_0RGB32, SDL_PIXELFORMAT_RGB888 },

{ AV_PIX_FMT_0BGR32, SDL_PIXELFORMAT_BGR888 },

{ AV_PIX_FMT_NE(RGB0, 0BGR), SDL_PIXELFORMAT_RGBX8888 },

{ AV_PIX_FMT_NE(BGR0, 0RGB), SDL_PIXELFORMAT_BGRX8888 },

{ AV_PIX_FMT_RGB32, SDL_PIXELFORMAT_ARGB8888 },

{ AV_PIX_FMT_RGB32_1, SDL_PIXELFORMAT_RGBA8888 },

{ AV_PIX_FMT_BGR32, SDL_PIXELFORMAT_ABGR8888 },

{ AV_PIX_FMT_BGR32_1, SDL_PIXELFORMAT_BGRA8888 },

{ AV_PIX_FMT_YUV420P, SDL_PIXELFORMAT_IYUV },

{ AV_PIX_FMT_YUYV422, SDL_PIXELFORMAT_YUY2 },

{ AV_PIX_FMT_UYVY422, SDL_PIXELFORMAT_UYVY },

{ AV_PIX_FMT_NONE, SDL_PIXELFORMAT_UNKNOWN },

};

/// <summary>

/// Error codes

/// </summary>

typedef enum {

//No error

ERRORCODE_NONE = 0,

//Playback

ERRORCODE_PLAY_OPENINPUTSTREAMFAILED = -0xffff,//Failed to open the input stream

ERRORCODE_PLAY_VIDEOINITFAILED,//Video initialization failed

ERRORCODE_PLAY_AUDIOINITFAILED,//Audio initialization failed

ERRORCODE_PLAY_LOOPERROR,//Playback loop error

ERRORCODE_PLAY_READPACKETERROR,//Packet reading error

ERRORCODE_PLAY_VIDEODECODEERROR,//Video decoding error

ERRORCODE_PLAY_AUDIODECODEERROR,//Audio decoding error

ERRORCODE_PLAY_VIDEODISPLAYERROR,//Video display error

ERRORCODE_PLAY_AUDIOPLAYERROR,//Audio playback error

//Demuxing

ERRORCODE_PACKET_CANNOTOPENINPUTSTREAM,//Cannot open the input stream

ERRORCODE_PACKET_CANNOTFINDSTREAMINFO,//Cannot find stream information

ERRORCODE_PACKET_DIDNOTFINDDANYSTREAM,//No stream found

ERRORCODE_PACKET_READFRAMEFAILED,//Failed to read a packet

//Decoding

ERRORCODE_DECODER_CANNOTALLOCATECONTEXT,//Failed to allocate the decoder context

ERRORCODE_DECODER_SETPARAMFAILED,//Failed to set the decoder context parameters

ERRORCODE_DECODER_CANNOTFINDDECODER,//Decoder not found

ERRORCODE_DECODER_OPENFAILED,//Failed to open the decoder

ERRORCODE_DECODER_SENDPACKEDFAILED,//Decoding failed

ERRORCODE_DECODER_MISSINGASTREAMTODECODE,//No stream to decode

//Video

ERRORCODE_VIDEO_DECODERINITFAILED,//Video decoder initialization failed

ERRORCODE_VIDEO_CANNOTGETSWSCONTEX,//Cannot get an FFmpeg swsContext

ERRORCODE_VIDEO_IMAGEFILLARRAYFAILED,//av_image_fill_arrays failed to map the image data into arrays

ERRORCODE_VIDEO_CANNOTRESAMPLEAFRAME,//Cannot convert a video frame

ERRORCODE_VIDEO_MISSINGSTREAM,//No video stream

//Audio

ERRORCODE_AUDIO_DECODERINITFAILED,//Audio decoder initialization failed

ERRORCODE_AUDIO_UNSUPORTDEVICESAMPLEFORMAT,//Audio device sample format not supported

ERRORCODE_AUDIO_SAMPLESSIZEINVALID,//Invalid sample size

ERRORCODE_AUDIO_MISSINGSTREAM,//No audio stream

ERRORCODE_AUDIO_SWRINITFAILED,//Failed to initialize the FFmpeg swr resampler

ERRORCODE_AUDIO_CANNOTCONVERSAMPLE,//Audio resampling failed

ERRORCODE_AUDIO_QUEUEISEMPTY,//Queue is empty

//Frames

ERRORCODE_FRAME_ALLOCFAILED,//Failed to allocate a frame

//Queues

ERRORCODE_FIFO_ALLOCFAILED,//Failed to allocate a queue

//SDL

ERRORCODE_SDL_INITFAILED,//SDL initialization failed

ERRORCODE_SDL_CANNOTCREATEMUTEX,//Cannot create a mutex

ERRORCODE_SDL_CANNOTOPENDEVICE, //Cannot open the audio device

ERRORCODE_SDL_CREATEWINDOWFAILED,//Failed to create the window

ERRORCODE_SDL_CREATERENDERERFAILED,//Failed to create the renderer

ERRORCODE_SDL_CREATETEXTUREFAILED,//Failed to create the texture

//Memory

ERRORCODE_MEMORY_ALLOCFAILED,//Memory allocation failed

ERRORCODE_MEMORY_LEAK,//Memory leak

//Arguments

ERRORCODE_ARGUMENT_INVALID,//Invalid argument

ERRORCODE_ARGUMENT_OUTOFRANGE,//Out of range

}ErrorCode;

/// <summary>

/// Log level

/// </summary>

typedef enum {

LOGLEVEL_NONE = 0,

LOGLEVEL_INFO = 1,

LOGLEVEL_DEBUG = 2,

LOGLEVEL_TRACE = 4,

LOGLEVEL_WARNNING = 8,

LOGLEVEL_ERROR = 16,

LOGLEVEL_ALL = LOGLEVEL_INFO | LOGLEVEL_DEBUG | LOGLEVEL_TRACE | LOGLEVEL_WARNNING | LOGLEVEL_ERROR

}

LogLevel;

//Logging macros

#define LOGHELPERINTERNALLOG(message,level,...) aclog(__FILE__,__FUNCTION__,__LINE__,level,message,##__VA_ARGS__)

#define LOG_INFO(message,...) LOGHELPERINTERNALLOG(message,LOGLEVEL_INFO, ##__VA_ARGS__)

#define LOG_DEBUG(message,...) LOGHELPERINTERNALLOG(message,LOGLEVEL_DEBUG,##__VA_ARGS__)

#define LOG_TRACE(message,...) LOGHELPERINTERNALLOG(message,LOGLEVEL_TRACE,##__VA_ARGS__)

#define LOG_WARNNING(message,...) LOGHELPERINTERNALLOG(message,LOGLEVEL_WARNNING,##__VA_ARGS__)

#define LOG_ERROR(message,...) LOGHELPERINTERNALLOG(message,LOGLEVEL_ERROR,##__VA_ARGS__)

static int logLevelFilter = LOGLEVEL_ALL;

static ThreadPool* _pool = NULL;

//Write a log entry

void aclog(const char* fileName, const char* methodName, int line, LogLevel level, const char* message, ...) {

if ((logLevelFilter & level) == 0)

return;

char dateTime[32];

time_t tt = time(0);

struct tm* t;

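va_list valist, valistCopy;

char buf[512];

char* pBuf = buf;

va_start(valist, message);

//vsnprintf consumes the va_list, so keep a copy for a possible second pass

va_copy(valistCopy, valist);

int size = vsnprintf(pBuf, sizeof(buf), message, valist);

//The return value excludes the terminating '\0', so size == sizeof(buf) already means truncation

if (size >= (int)sizeof(buf))

{

char* bigBuf = (char*)av_malloc(size + 1);

if (bigBuf)

{

vsnprintf(bigBuf, size + 1, message, valistCopy);

pBuf = bigBuf;

}

}

va_end(valistCopy);

va_end(valist);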

t = localtime(&tt);

sprintf(dateTime, "%04d-%02d-%02d %02d:%02d:%02d", t->tm_year + 1900, t->tm_mon + 1, t->tm_mday, t->tm_hour, t->tm_min, t->tm_sec);

//This could be replaced with writing to a file

printf("%s %d %lu %s %s %d: %s\n", dateTime, (int)level, (unsigned long)SDL_ThreadID(), fileName, methodName, line, pBuf);

if (pBuf != buf)

av_free(pBuf);

}

//Log filter; set it to LOGLEVEL_NONE to disable logging

void setLogFilter(LogLevel level) {

logLevelFilter = level;

}

//Initialize a message queue

int messageQueue_init(MessageQueue* _this, int capacity, int elementSize) {

_this->_queue = av_fifo_alloc(elementSize * capacity);

if (!_this->_queue)

return ERRORCODE_MEMORY_ALLOCFAILED;

_this->_mtx = SDL_CreateMutex();

if (!_this->_mtx)

return ERRORCODE_MEMORY_ALLOCFAILED;

_this->_cv = SDL_CreateCond();

if (!_this->_cv)

return ERRORCODE_MEMORY_ALLOCFAILED;

_this->_capacity = capacity;

_this->_elementSize = elementSize;

return 0;

}

//Deinitialize a message queue

void messageQueue_deinit(MessageQueue* _this) {

if (_this->_queue)

av_fifo_free(_this->_queue);

if (_this->_cv)

SDL_DestroyCond(_this->_cv);

if (_this->_mtx)

SDL_DestroyMutex(_this->_mtx);

memset(_this, 0, sizeof(MessageQueue));

}

//Push a message

int messageQueue_push(MessageQueue* _this, void* msg) {

int ret = 0;

SDL_LockMutex(_this->_mtx);

ret = av_fifo_generic_write(_this->_queue, msg, _this->_elementSize, NULL);

SDL_CondSignal(_this->_cv);

SDL_UnlockMutex(_this->_mtx);

return ret > 0;

}

//Poll for a message without blocking; returns nonzero if a message was read

int messageQueue_poll(MessageQueue* _this, void* msg) {

int got = 0;

SDL_LockMutex(_this->_mtx);

if (av_fifo_size(_this->_queue) >= _this->_elementSize)

{

av_fifo_generic_read(_this->_queue, msg, _this->_elementSize, NULL);

got = 1;

}

SDL_UnlockMutex(_this->_mtx);

return got;

}

//Wait for a message (blocking)

void messageQueue_wait(MessageQueue* _this, void* msg) {

SDL_LockMutex(_this->_mtx);

while (1) {

int size = av_fifo_size(_this->_queue);

if (size >= _this->_elementSize)

{

av_fifo_generic_read(_this->_queue, msg, _this->_elementSize, NULL);

break;

}

SDL_CondWait(_this->_cv, _this->_mtx);

}

SDL_UnlockMutex(_this->_mtx);

}

//Initialize an object pool

int ojectPool_init(OjectPool* _this, void* bufferArray, int elementSize, int arraySize)

{

if (elementSize < 1 || arraySize < 1)

return ERRORCODE_ARGUMENT_INVALID;

_this->buffer = (unsigned char*)bufferArray;

_this->elementSize = elementSize;

_this->arraySize = arraySize;

_this->_arrayUseState = (int*)av_mallocz(sizeof(int) * arraySize);

if (!_this->_arrayUseState)

return ERRORCODE_MEMORY_ALLOCFAILED;

_this->_mtx = SDL_CreateMutex();

if (!_this->_mtx)

return ERRORCODE_MEMORY_ALLOCFAILED;

_this->_cv = SDL_CreateCond();

if (!_this->_cv)

return ERRORCODE_MEMORY_ALLOCFAILED;

return 0;

}

//Deinitialize an object pool

void objectPool_deinit(OjectPool* _this)

{

av_free(_this->_arrayUseState);

if (_this->_cv)

SDL_DestroyCond(_this->_cv);

if (_this->_mtx)

SDL_DestroyMutex(_this->_mtx);

memset(_this, 0, sizeof(OjectPool));

}

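//Take an object from the pool; timeout is in milliseconds, -1 waits indefinitely, NULL is returned on timeout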

void* objectPool_take(OjectPool* _this, int timeout) {

void* element = NULL;

SDL_LockMutex(_this->_mtx);

while (1)

{

for (int i = 0; i < _this->arraySize; i++)

{

if (!_this->_arrayUseState[i])

{

element = &((Uint8*)_this->buffer)[i * _this->elementSize];

_this->_arrayUseState[i] = 1;

break;

}

}

if (!element)

{

if (timeout == -1)

{

int ret = SDL_CondWait(_this->_cv, _this->_mtx);

if (ret == -1)

{

LOG_ERROR("SDL_CondWait error");

break;

}

}

else

{

int ret = SDL_CondWaitTimeout(_this->_cv, _this->_mtx, timeout);

if (ret != 0)

{

if (ret == -1)

{

LOG_ERROR("SDL_CondWait error");

}

break;

}

}

}

else

{

break;

}

}

SDL_UnlockMutex(_this->_mtx);

return element;

}

//Return an object to the pool

void objectPool_restore(OjectPool* _this, void* element) {

SDL_LockMutex(_this->_mtx);

for (int i = 0; i < _this->arraySize; i++)

{

if (_this->_arrayUseState[i] && &((Uint8*)_this->buffer)[i * _this->elementSize] == element)

{

SDL_CondSignal(_this->_cv);

_this->_arrayUseState[i] = 0;

break;

}

}

SDL_UnlockMutex(_this->_mtx);

}

//Initialize a thread pool

int threadPool_init(ThreadPool* _this, int maxThreadCount) {

_this->maxThreadCount = maxThreadCount;

return ojectPool_init(&_this->_pool, av_mallocz(sizeof(ThreadInfo) * maxThreadCount), sizeof(ThreadInfo), maxThreadCount);

}

//Deinitialize a thread pool

void threadPool_denit(ThreadPool* _this) {

ThreadInfo* threads = (ThreadInfo*)_this->_pool.buffer;

if (threads)

{

for (int i = 0; i < _this->maxThreadCount; i++)

{

int status;

if (threads[i]._thread)

{

int msg = 0;

messageQueue_push(&threads[i]._queue, &msg);

SDL_WaitThread(threads[i]._thread, &status);

messageQueue_deinit(&threads[i]._queue);

}

}

}

av_freep(&_this->_pool.buffer);

objectPool_deinit(&_this->_pool);

}

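//Thread pool worker procedure: run the task, return itself to the pool, then wait for the next task (a message of 0 means exit)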

int threadPool_threadProc(void* data)

{

ThreadInfo* info = (ThreadInfo*)data;

int msg = 1;

while (msg) {

info->_fn(info->_arg);

objectPool_restore(&info->_threadPool->_pool, info);

messageQueue_wait(&info->_queue, &msg);

}

return 0;

}

//Run a function on the thread pool

void threadPool_run(ThreadPool* _this, SDL_ThreadFunction fn, void* arg) {

ThreadInfo* info = (ThreadInfo*)objectPool_take(&_this->_pool, -1);

info->_fn = fn;

info->_arg = arg;

if (info->_thread)

{

int msg = 1;

messageQueue_push(&info->_queue, &msg);

}

else

{

info->_threadPool = _this;

messageQueue_init(&info->_queue, 1, sizeof(int));

info->_thread = SDL_CreateThread(threadPool_threadProc, "threadPool_threadProc", info);

}

}

//Run a function on the player thread by posting it to the player's message queue

void play_beginInvoke(Play* _this, SDL_ThreadFunction fn, void* arg)

{

PlayMessage msg;

msg.type = PLAYMESSAGETYPE_INVOKE;

//Explicit cast: C++ does not convert a function pointer to void* implicitly

msg.param1 = (void*)fn;

msg.param2 = arg;

messageQueue_push(&_this->mq, &msg);

}

//#include<chrono>

/// <summary>

/// Returns the current time

/// </summary>

/// <returns>Current time in seconds, with microsecond precision</returns>

static double getCurrentTime()

{

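//Uses FFmpeg's av_gettime_relative. Without FFmpeg, replace it with a platform clock function: the unit must be seconds with microsecond precision, and either a relative or an absolute clock works.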

return av_gettime_relative() / 1000000.0;

//return std::chrono::time_point_cast <std::chrono::nanoseconds>(std::chrono::high_resolution_clock::now()).time_since_epoch().count() / 1e+9;

}

/// <summary>

/// Reset clock synchronization

/// Typically used when pausing or seeking

/// </summary>

/// <param name="syn">Clock synchronization object</param>

void synchronize_reset(Synchronize* syn) {

SynchronizeType type = syn->type;

memset(syn, 0, sizeof(Synchronize));

syn->type = type;

}

/// <summary>

/// Get the master clock

/// </summary>

/// <param name="syn">Clock synchronization object</param>

/// <returns>The master clock</returns>

Clock* synchronize_getMasterClock(Synchronize* syn) {

switch (syn->type)

{

case SYNCHRONIZETYPE_AUDIO:

return &syn->audio;

case SYNCHRONIZETYPE_VIDEO:

return &syn->video;

case SYNCHRONIZETYPE_ABSOLUTE:

return &syn->absolute;

default:

break;

}

return 0;

}

/// <summary>

/// Get the time of the master clock

/// </summary>

/// <param name="syn">Clock synchronization object</param>

/// <returns>Time in seconds</returns>

double synchronize_getMasterTime(Synchronize* syn) {

return getCurrentTime() - synchronize_getMasterClock(syn)->startTime;

}

/// <summary>

/// Set the time of a clock

/// </summary>

/// <param name="syn">Clock synchronization object</param>

/// <param name="clock">Clock to set</param>

/// <param name="pts">Current time, in seconds</param>

void synchronize_setClockTime(Synchronize* syn, Clock* clock, double pts)

{

clock->currentPts = pts;

clock->startTime = getCurrentTime() - pts;

}

/// <summary>

/// Get the time of a clock

/// </summary>

/// <param name="syn">Clock synchronization object</param>

/// <param name="clock">Clock object</param>

/// <returns>Time in seconds</returns>

double synchronize_getClockTime(Synchronize* syn, Clock* clock)

{

return getCurrentTime() - clock->startTime;

}

/// <summary>

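/// Update the video clock

/// </summary>

/// <param name="syn">Clock synchronization object</param>

/// <param name="pts">Video frame pts, in seconds</param>

/// <param name="duration">Video frame duration, in seconds. Pass 0 to have it estimated internally</param>

/// <returns>Greater than 0: delay by the returned number of seconds; 0: display; less than 0: drop the frame</returns>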

double synchronize_updateVideo(Synchronize* syn, double pts, double duration)

{

if (duration == 0)

//Estimate the duration

{

if (pts != syn->video.currentPts)

syn->estimateVideoDuration = (syn->estimateVideoDuration * syn->n + pts - syn->video.currentPts) / (double)(syn->n + 1);

duration = syn->estimateVideoDuration;

//Estimate from the most recent 3 frames only

if (syn->n++ > 3)

syn->estimateVideoDuration = syn->n = 0;

if (duration == 0)

duration = 0.1;

}

if (syn->video.startTime == 0)

{

syn->video.startTime = getCurrentTime() - pts;

}

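//All times below are in seconds

//Current time on the video clock

double currentTime = getCurrentTime() - syn->video.startTime;

//Time difference: greater than 0 means the frame is late, less than 0 means it is early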

double diff = currentTime - pts;

double sDiff = 0;

if (syn->type != SYNCHRONIZETYPE_VIDEO && synchronize_getMasterClock(syn)->startTime != 0)

//Sync to the master clock

{

sDiff = syn->video.startTime - synchronize_getMasterClock(syn)->startTime;

diff += sDiff;

}

//Correction (disabled): when the clock and the video frame drift apart by more than 0.1 s, reset the start time

//if (diff > 0.1)

//{

// syn->video.startTime = getCurrentTime() - pts;

// currentTime = pts;

// diff = 0;

//}

//Early: delay

if (diff < -0.001)

{

if (diff < -0.1)

{

diff = -0.1;

}

//printf("video-time:%.3lfs audio-time:%.3lfs avDiff:%.4lfms early:%.4lfms \n", getCurrentTime() - syn->video.startTime, getCurrentTime() - syn->audio.startTime, sDiff * 1000, diff * 1000);

return -diff;

}

syn->video.currentPts = pts;

//Late: drop the frame. duration is the length of one frame; lagging by up to one duration is normal, so one extra duration is added as the drop threshold

if (diff > 2 * duration)

{

//printf("time:%.3lfs avDiff %.4lfms late for:%.4lfms droped\n", pts, sDiff * 1000, diff * 1000);

return -1;

}

//Update the video clock

printf("video-time:%.3lfs audio-time:%.3lfs absolute-time:%.3lfs synDiff:%.4lfms diff:%.4lfms \r", pts, getCurrentTime() - syn->audio.startTime, getCurrentTime() - syn->absolute.startTime, sDiff * 1000, diff * 1000);

syn->video.startTime = getCurrentTime() - pts;

if (syn->absolute.startTime == 0)

{

syn->absolute.startTime = syn->video.startTime;

}

return 0;

}

//double lastTime = 0;

/// <summary>

/// Update the audio clock

/// </summary>

/// <param name="syn">Clock synchronization object</param>

/// <param name="samples">Number of samples</param>

/// <param name="samplerate">Sample rate</param>

/// <returns>Number of samples that should be played</returns>

int synchronize_updateAudio(Synchronize* syn, int samples, int samplerate) {

if (syn->type != SYNCHRONIZETYPE_AUDIO && synchronize_getMasterClock(syn)->startTime != 0)

{

//Sync to the master clock

double audioTime = getCurrentTime() - syn->audio.startTime;

double diff = 0;

diff = synchronize_getMasterTime(syn) - audioTime;

int oldSamples = samples;

if (fabs(diff) > 0.01) {

samples += diff * samplerate;

}

if (samples < 0)

{

samples = 0;

}

if (samples > oldSamples * 2)

{

samples = oldSamples * 2;

}

}

syn->audio.currentPts += (double)samples / samplerate;

syn->audio.startTime = getCurrentTime() - syn->audio.currentPts;

if (syn->absolute.startTime == 0)

{

syn->absolute.startTime = syn->audio.startTime;

}

return samples;

}

/// <summary>

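/// Update the audio clock from a data length in bytes

/// </summary>

/// <param name="syn">Clock synchronization object</param>

/// <param name="bytesSize">Data length in bytes</param>

/// <param name="samplerate">Sample rate</param>

/// <param name="channels">Number of channels</param>

/// <param name="bitsPerSample">Bits per sample</param>

/// <returns>Number of bytes that should be played</returns>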

int synchronize_updateAudioByBytesSize(Synchronize* syn, size_t bytesSize, int samplerate, int channels, int bitsPerSample) {

return synchronize_updateAudio(syn, bytesSize / (channels * bitsPerSample / 8), samplerate) * (bitsPerSample / 8) * channels;

}

//Initialize a decoder

static int decoder_init(Play* play, Decoder* decoder, int wantFifoPacketSize, int wantFifoFrameSize) {

//Allocate the decoding context

decoder->codecContext = avcodec_alloc_context3(NULL);

AVDictionary* opts = NULL;

if (decoder->codecContext == NULL)

{

LOG_ERROR("Could not allocate AVCodecContext");

return ERRORCODE_DECODER_CANNOTALLOCATECONTEXT;

}

//Fill the context from the stream parameters, then look up the decoder

if (avcodec_parameters_to_context(decoder->codecContext, play->formatContext->streams[decoder->streamIndex]->codecpar) < 0)

{

LOG_ERROR("Could not init AVCodecContext");

return ERRORCODE_DECODER_SETPARAMFAILED;

}

const AVCodec* codec = avcodec_find_decoder(decoder->codecContext->codec_id);

if (codec == NULL) {

LOG_ERROR("Codec not found");

return ERRORCODE_DECODER_CANNOTFINDDECODER;

}

//Enable multithreaded decoding

if (!av_dict_get(opts, "threads", NULL, 0))

av_dict_set(&opts, "threads", "auto", 0);

//Open the decoder

if (avcodec_open2(decoder->codecContext, codec, &opts) < 0) {

LOG_ERROR("Could not open codec");

av_dict_free(&opts);

return ERRORCODE_DECODER_OPENFAILED;

}

av_dict_free(&opts);

//Allocate the temporary frame

decoder->frame = av_frame_alloc();

if (!decoder->frame)

{

LOG_ERROR("Alloc avframe failed");

return ERRORCODE_FRAME_ALLOCFAILED;

}

//Allocate the packet queue

decoder->fifoPacket = av_fifo_alloc(sizeof(AVPacket*) * wantFifoPacketSize);

if (!decoder->fifoPacket)

{

LOG_ERROR("alloc packet fifo failed");

return ERRORCODE_FIFO_ALLOCFAILED;

}

//Allocate the frame queue

decoder->fifoFrame = av_fifo_alloc(sizeof(AVFrame*) * wantFifoFrameSize);

if (!decoder->fifoFrame)

{

LOG_ERROR("alloc frame fifo failed");

return ERRORCODE_FIFO_ALLOCFAILED;

}

return 0;

}

//Clear the decoder's queues

static void decoder_clear(Play* play, Decoder* decoder) {

//Clear the packet queue

if (decoder->fifoPacket)

{

while (av_fifo_size(decoder->fifoPacket) > 0)

{

AVPacket* packet;

av_fifo_generic_read(decoder->fifoPacket, &packet, sizeof(AVPacket*), NULL);

if (packet != &play->packet)

{

av_packet_unref(packet);

av_packet_free(&packet);

}

}

}

//Clear the frame queue

if (decoder->fifoFrame)

{

while (av_fifo_size(decoder->fifoFrame) > 0)

{

AVFrame* frame;

av_fifo_generic_read(decoder->fifoFrame, &frame, sizeof(AVFrame*), NULL);

av_frame_unref(frame);

av_frame_free(&frame);

}

}

//Flush the decoder's internal buffers

if (decoder->codecContext)

{

avcodec_flush_buffers(decoder->codecContext);

}

}

//Deinitialize a decoder

static void decoder_deinit(Play* play, Decoder* decoder)

{

decoder_clear(play, decoder);

if (decoder->codecContext)

{

avcodec_close(decoder->codecContext);

avcodec_free_context(&decoder->codecContext);

}

if (decoder->fifoPacket)

{

av_fifo_free(decoder->fifoPacket);

decoder->fifoPacket = NULL;

}

if (decoder->fifoFrame)

{

av_fifo_free(decoder->fifoFrame);

decoder->fifoFrame = NULL;

}

if (decoder->frame)

{

if (decoder->frame->format != -1)

{

av_frame_unref(decoder->frame);

}

av_frame_free(&decoder->frame);

}

decoder->eofFrame = 0;

}

//Decode: move finished frames into the frame queue, then feed the next queued packet

static int decoder_decode(Play* play, Decoder* decoder) {

int ret = 0;

AVPacket* packet = NULL;

if (decoder->streamIndex == -1)

{

LOG_ERROR("Decoder missing a stream");

return ERRORCODE_DECODER_MISSINGASTREAMTODECODE;

}

if (av_fifo_space(decoder->fifoFrame) < 1)

//Frame queue is full

{

goto end;

}

//Receive frames left over from the previous decode

while (avcodec_receive_frame(decoder->codecContext, decoder->frame) == 0) {

AVFrame* frame = av_frame_clone(decoder->frame);

av_frame_unref(decoder->frame);

av_fifo_generic_write(decoder->fifoFrame, &frame, sizeof(AVFrame*), NULL);

if (av_fifo_space(decoder->fifoFrame) < 1)

//Frame queue is full

{

goto end;

}

}

if (av_fifo_size(decoder->fifoPacket) > 0)

//The packet queue has data: start decoding

{

av_fifo_generic_read(decoder->fifoPacket, &packet, sizeof(AVPacket*), NULL);

//Send the packet

if (avcodec_send_packet(decoder->codecContext, packet) < 0)

{

LOG_ERROR("Decode error");

ret = ERRORCODE_DECODER_SENDPACKEDFAILED;

goto end;

}

//Receive the decoded frames

while (avcodec_receive_frame(decoder->codecContext, decoder->frame) == 0) {

AVFrame* frame = av_frame_clone(decoder->frame);

av_frame_unref(decoder->frame);

av_fifo_generic_write(decoder->fifoFrame, &frame, sizeof(AVFrame*), NULL);

if (av_fifo_space(decoder->fifoFrame) < 1)

//Frame queue is full

{

goto end;

}

}

}

if (play->eofPacket)

{

decoder->eofFrame = 1;

}

end:

if (packet && packet != &play->packet)

{

av_packet_unref(packet);

av_packet_free(&packet);

}

return ret;

}

//Audio device playback callback

static void audio_callback(void* userdata, uint8_t* stream, int len) {

Play* play = (Play*)userdata;

int samples = 0;

//Read audio data from the queue. AVAudioFifo is not thread-safe and this callback is triggered on SDL's audio thread, so a lock is needed

SDL_LockMutex(play->audio.mutex);

if (!play->isPaused)

{

if (av_audio_fifo_size(play->audio.playFifo) >= play->audio.spec.samples)

{

int drain = 0;

//Number of samples to write

samples = play->audio.spec.samples;

//Clock synchronization: get the number of samples that should be written. When syncing to audio, it always equals the number requested.

samples = synchronize_updateAudio(&play->synchronize, samples, play->audio.spec.freq);

samples += play->audio.accumulateSamples;

if (samples > av_audio_fifo_size(play->audio.playFifo))

{

play->audio.accumulateSamples = samples - av_audio_fifo_size(play->audio.playFifo);

samples = av_audio_fifo_size(play->audio.playFifo);

}

else

{

play->audio.accumulateSamples = 0;

}

if (samples > play->audio.spec.samples)

//More than the device needs: discard the surplus

{

drain = samples - play->audio.spec.samples;

samples = play->audio.spec.samples;

}

if (play->audio.volume + SDL_MIX_MAXVOLUME != SDL_MIX_MAXVOLUME)

//Apply the volume

{

if (!play->audio.mixBuffer)

{

play->audio.mixBuffer = (uint8_t*)av_malloc(len);

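if (!play->audio.mixBuffer)

{

LOG_ERROR("mixBuffer alloc failed");

//Output silence and release the lock before leaving; returning while locked would deadlock the next lock on the play queue

memset(stream, 0, len);

SDL_UnlockMutex(play->audio.mutex);

return;

}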

}

av_audio_fifo_read(play->audio.playFifo, (void**)&play->audio.mixBuffer, samples);

int len2 = av_samples_get_buffer_size(0, play->audio.spec.channels, samples, play->audio.forceSampleFormat, 1);

memset(stream, 0, len2);

SDL_MixAudioFormat(stream, play->audio.mixBuffer, play->audio.spec.format, len2, play->audio.volume + SDL_MIX_MAXVOLUME);

}

else

//Write directly

{

av_audio_fifo_read(play->audio.playFifo, (void**)&stream, samples);

}

av_audio_fifo_drain(play->audio.playFifo, drain);

}

}

SDL_UnlockMutex(play->audio.mutex);

//Fill the rest of the buffer with silence

int fillSize = av_samples_get_buffer_size(0, play->audio.spec.channels, samples, play->audio.forceSampleFormat, 1);

if (fillSize < 0)

fillSize = 0;

if (len - fillSize > 0)

{

memset(stream + fillSize, 0, len - fillSize);

}

}

//Initialize the audio module

static int audio_init(Play* play, Audio* audio) {

//Initialize the decoder

if (decoder_init(play, &audio->decoder, 600, 100) != 0)

{

LOG_ERROR("audio decoder init error");

return ERRORCODE_AUDIO_DECODERINITFAILED;

}

//Initialize SDL

if ((SDL_WasInit(0) & (SDL_INIT_VIDEO | SDL_INIT_AUDIO | SDL_INIT_TIMER)) == 0)

{

if (SDL_Init(SDL_INIT_VIDEO | SDL_INIT_AUDIO | SDL_INIT_TIMER)) {

LOG_ERROR("Could not initialize SDL - %s", SDL_GetError());

return ERRORCODE_SDL_INITFAILED;

}

}

//Open the audio device (the channel count is used directly because channel_layout may be 0 when unknown)

audio->wantedSpec.channels = audio->decoder.codecContext->channels;

audio->wantedSpec.freq = audio->decoder.codecContext->sample_rate;

audio->wantedSpec.format = AUDIO_F32SYS;

audio->wantedSpec.silence = 0;

audio->wantedSpec.samples = FFMAX(512, 2 << av_log2(audio->wantedSpec.freq / 30));

audio->wantedSpec.callback = audio_callback;

audio->wantedSpec.userdata = play;

audio->audioId = SDL_OpenAudioDevice(NULL, 0, &audio->wantedSpec, &audio->spec, SDL_AUDIO_ALLOW_ANY_CHANGE);

if (audio->audioId < 2)

{

LOG_ERROR("Open audio device error");

return ERRORCODE_SDL_CANNOTOPENDEVICE;

}

//Map the device sample format to an FFmpeg sample format

switch (audio->spec.format)

{

case AUDIO_S16SYS:

audio->forceSampleFormat = AV_SAMPLE_FMT_S16;

break;

case AUDIO_S32SYS:

audio->forceSampleFormat = AV_SAMPLE_FMT_S32;

break;

case AUDIO_F32SYS:

audio->forceSampleFormat = AV_SAMPLE_FMT_FLT;

break;

default:

LOG_ERROR("audio device format %d is not supported", (int)audio->spec.format);

return ERRORCODE_AUDIO_UNSUPORTDEVICESAMPLEFORMAT;

}

//Create the mutex that guards the audio play queue

audio->mutex = SDL_CreateMutex();

if (!audio->mutex)

{

LOG_ERROR("alloc mutex failed");

return ERRORCODE_SDL_CANNOTCREATEMUTEX;

}

//Audio play queue

audio->playFifo = av_audio_fifo_alloc(audio->forceSampleFormat, audio->spec.channels, audio->spec.samples * 30);

if (!audio->playFifo)

{

LOG_ERROR("alloc audio fifo failed");

return ERRORCODE_FIFO_ALLOCFAILED;

}

//Start playback on the device

SDL_PauseAudioDevice(audio->audioId, 0);

return 0;

}

//Deinitialize the audio module

static void audio_deinit(Play* play, Audio* audio)

{

if (audio->audioId >= 2)

{

SDL_PauseAudioDevice(audio->audioId, 1);

SDL_CloseAudioDevice(audio->audioId);

audio->audioId = 0;

}

if (audio->mutex)

{

SDL_DestroyMutex(audio->mutex);

audio->mutex = NULL;

}

if (play->audio.playFifo)

{

av_audio_fifo_free(play->audio.playFifo);

play->audio.playFifo = NULL;

}

if (audio->swrContext)

{

swr_free(&audio->swrContext);

}

if (audio->swrBuffer)

{

av_freep(&audio->swrBuffer);

}

if (audio->mixBuffer)

{

av_freep(&audio->mixBuffer);

}

decoder_deinit(play, &audio->decoder);

audio->eofPlay = 0;

audio->sofPlay = 0;

}

//Audio playback

static int audio_play(Play* play, Audio* audio) {

if (audio->decoder.streamIndex == -1)

{

LOG_ERROR("audio play missing audio stream");

//No audio stream

return ERRORCODE_AUDIO_MISSINGSTREAM;

}

if (play->video.decoder.streamIndex != -1 && !play->video.sofDisplay)

{

return 0;

}

while (av_fifo_size(play->audio.decoder.fifoFrame) > 0)

{

AVFrame* frame = NULL;

uint8_t* data = NULL;

int dataSize = 0;

int samples = 0;

av_fifo_generic_peek(play->audio.decoder.fifoFrame, &frame, sizeof(AVFrame*), NULL);

if (play->audio.forceSampleFormat != play->audio.decoder.codecContext->sample_fmt || play->audio.spec.freq != frame->sample_rate || play->audio.spec.channels != frame->channels)

//Resample

{

//Estimate the output sample count, with 256 extra samples of headroom

int out_count = (int64_t)frame->nb_samples * play->audio.spec.freq / frame->sample_rate + 256;

//Compute the output buffer size

int out_size = av_samples_get_buffer_size(NULL, play->audio.spec.channels, out_count, play->audio.forceSampleFormat, 0);

//Input data pointers

const uint8_t** in = (const uint8_t**)frame->extended_data;

//Output buffer pointer

uint8_t** out = &play->audio.swrBuffer;

int len2 = 0;

if (out_size < 0) {

LOG_ERROR("sample output size value %d was invalid", out_size);

return ERRORCODE_AUDIO_SAMPLESSIZEINVALID;

}

if (!play->audio.swrContext)

//Create the resampler

{

play->audio.swrContext = swr_alloc_set_opts(NULL, av_get_default_channel_layout(play->audio.spec.channels), play->audio.forceSampleFormat, play->audio.spec.freq, play->audio.decoder.codecContext->channel_layout, play->audio.decoder.codecContext->sample_fmt, play->audio.decoder.codecContext->sample_rate, 0, NULL);

if (!play->audio.swrContext || swr_init(play->audio.swrContext) < 0) {

LOG_ERROR("swr_alloc_set_opts or swr_init failed");

return ERRORCODE_AUDIO_SWRINITFAILED;

}

}

if (!play->audio.swrBuffer)

//Allocate the output buffer

{

play->audio.swrBuffer = (uint8_t*)av_mallocz(out_size);

if (!play->audio.swrBuffer)

{

LOG_ERROR("audio swr output buffer alloc failed");

return ERRORCODE_MEMORY_ALLOCFAILED;

}

}

//Run the resampler

len2 = swr_convert(play->audio.swrContext, out, out_count, in, frame->nb_samples);

if (len2 < 0) {

LOG_ERROR("swr_convert failed");

return ERRORCODE_AUDIO_CANNOTCONVERSAMPLE;

}

//Resampled output data

data = play->audio.swrBuffer;

//Output data length in bytes

dataSize = av_samples_get_buffer_size(0, play->audio.spec.channels, len2, play->audio.forceSampleFormat, 1);

samples = len2;

}

else

//No resampling needed

{

data = frame->data[0];

dataSize = av_samples_get_buffer_size(NULL, frame->channels, frame->nb_samples, play->audio.forceSampleFormat, 1);

samples = frame->nb_samples;

}

if (dataSize < 0)

{

LOG_ERROR("sample data size value %d was invalid", dataSize);

return ERRORCODE_AUDIO_SAMPLESSIZEINVALID;

}

//Write to the play queue

SDL_LockMutex(play->audio.mutex);

if (av_audio_fifo_space(play->audio.playFifo) >= samples)

{

//Synchronize (correct) the clock

AVRational timebase = play->formatContext->streams[audio->decoder.streamIndex]->time_base;

//Timestamp of the current frame, in seconds

double pts = (double)frame->pts * timebase.num / timebase.den;

//Subtracting the duration of the data still in the play queue gives the current audio clock

pts -= (double)av_audio_fifo_size(play->audio.playFifo) / play->audio.spec.freq;

//Set the audio clock

synchronize_setClockTime(&play->synchronize, &play->synchronize.audio, pts);

//Synchronize (correct) the clock -- end

//Write to the play queue

av_audio_fifo_write(play->audio.playFifo, (void**)&data, samples);

//Dequeue the frame from the decoded-frame queue

av_fifo_generic_read(play->audio.decoder.fifoFrame, &frame, sizeof(AVFrame*), NULL);

av_frame_unref(frame);

av_frame_free(&frame);

if (!audio->sofPlay)

//Mark playback as started

{

audio->sofPlay = 1;

}

}

else

{

SDL_UnlockMutex(play->audio.mutex);

break;

}

SDL_UnlockMutex(play->audio.mutex);

}

//Compute how long the loop may sleep: the duration of the audio still queued

SDL_LockMutex(play->audio.mutex);

double canSleepTime = (double)av_audio_fifo_size(play->audio.playFifo) / play->audio.spec.freq;

double wakeupTime = getCurrentTime() + canSleepTime;

if (play->video.decoder.streamIndex == -1 || wakeupTime < play->wakeupTime)

{

play->wakeupTime = wakeupTime;

}

SDL_UnlockMutex(play->audio.mutex);

if (av_fifo_size(play->audio.decoder.fifoFrame) < 1 && audio->decoder.eofFrame)

//Mark playback as finished

{

audio->eofPlay = 1;

}

return 0;

}

//Initialize the video module

static int video_init(Play* play, Video* video) {

//Initialize the decoder

if (decoder_init(play, &video->decoder, 600, 1) != 0)

{

LOG_ERROR("video decoder init error");

return ERRORCODE_VIDEO_DECODERINITFAILED;

}

//Initialize SDL

if ((SDL_WasInit(0) & (SDL_INIT_VIDEO | SDL_INIT_AUDIO | SDL_INIT_TIMER)) == 0)

{

if (SDL_Init(SDL_INIT_VIDEO | SDL_INIT_AUDIO | SDL_INIT_TIMER)) {

LOG_ERROR("Could not initialize SDL - %s", SDL_GetError());

return ERRORCODE_SDL_INITFAILED;

}

}

return 0;

}

//Deinitialize the video module

static void video_deinit(Play* play, Video* video) {

if (video->swsContext)

{

sws_freeContext(video->swsContext);

video->swsContext = NULL;

}

if (video->swsBuffer)

{

av_free(video->swsBuffer);

video->swsBuffer = NULL;

}

if (video->sdlTexture)

{

SDL_DestroyTexture(video->sdlTexture);

video->sdlTexture = NULL;

}

if (video->sdlRenderer)

{

SDL_DestroyRenderer(video->sdlRenderer);

video->sdlRenderer = NULL;

}

if (video->screen)

{

SDL_DestroyWindow(video->screen);

video->screen = NULL;

}

decoder_deinit(play, &video->decoder);

video->eofDisplay = 0;

video->sofDisplay = 0;

}

double get_rotation(AVStream* st)

{

AVDictionaryEntry* rotate_tag = av_dict_get(st->metadata, "rotate", NULL, 0);

double theta = 0;

if (rotate_tag && *rotate_tag->value && strcmp(rotate_tag->value, "0")) {

theta = atof(rotate_tag->value);

}

theta -= 360 * floor(theta / 360 + 0.9 / 360);

if (fabs(theta - 90 * round(theta / 90)) > 2)

{

LOG_INFO("Odd rotation angle");

}

return theta;

}

/// <summary>

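/// Compute the destination rectangle for a rotated image

/// </summary>

/// <param name="srcRect">Source image area</param>

/// <param name="dstRect">Destination (window) area</param>

/// <param name="angle">Rotation angle in degrees</param>

/// <returns>The unrotated rectangle, centred in dstRect, to pass to SDL_RenderCopyEx</returns>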

static SDL_Rect getRotateRect(SDL_Rect *srcRect, SDL_Rect* dstRect,double angle) {

SDL_Rect targetRect;

const double PI = 3.14159265358979323846;

double theta = PI / 180.0 * angle;

//Size of the bounding box of the rotated image

int width = srcRect->h * fabs(sin(theta) )+ srcRect->w * fabs(cos(theta)) + 0.5;

int height = srcRect->h * fabs(cos(theta)) + srcRect->w * fabs(sin(theta)) + 0.5;

double srcRatio = (double)srcRect->w / srcRect->h;

double srcBorderRatio = (double)width / height;

double dstRatio = (double)dstRect->w / dstRect->h;

//Scale the bounding box to fit the destination area

int zoomWidth;

int zoomHeight;

if (srcBorderRatio > dstRatio)

{

zoomWidth = dstRect->w;

zoomHeight = dstRect->w / srcBorderRatio;

}

else

{

zoomWidth = dstRect->h * srcBorderRatio;

zoomHeight = dstRect->h;

}

//Recover the unrotated image size from the scaled bounding box

targetRect.h = (double)zoomWidth / (fabs(sin(theta) )+ srcRatio * fabs(cos(theta)));

targetRect.w = targetRect.h * srcRatio;

targetRect.x = (dstRect->w- targetRect.w ) / 2;

targetRect.y = (dstRect->h- targetRect.h ) / 2;

return targetRect;

}

//Render to the window

static int video_present(Play* play, Video* video, AVFrame* frame)

{

SDL_Rect sdlRect;

SDL_Rect sdlRect2;

uint8_t* dst_data[4];

int dst_linesize[4];

if (!video->screen)

{

//Create the window

video->screen = SDL_CreateWindow("video play window", SDL_WINDOWPOS_UNDEFINED, SDL_WINDOWPOS_UNDEFINED,

video->screen_w, video->screen_h,

SDL_WINDOW_OPENGL);

if (!video->screen) {

LOG_ERROR("SDL: could not create window - exiting:%s\n", SDL_GetError());

return ERRORCODE_SDL_CREATEWINDOWFAILED;

}

}

if (!video->sdlRenderer)

//Initialize the SDL renderer and texture

{

video->angle = get_rotation(play->formatContext->streams[play->video.decoder.streamIndex]);

video->sdlRenderer = SDL_CreateRenderer(video->screen, -1, 0);

if (!video->sdlRenderer)

{

LOG_ERROR("Create sdl renderer error");

return ERRORCODE_SDL_CREATERENDERERFAILED;

}

//Pick a matching pixel format

struct TextureFormatEntry format;

format.format = AV_PIX_FMT_YUV420P;

format.texture_fmt = SDL_PIXELFORMAT_IYUV;

for (int i = 0; i < sizeof(sdl_texture_format_map) / sizeof(struct TextureFormatEntry); i++)

{

if (sdl_texture_format_map[i].format == video->decoder.codecContext->pix_fmt)

{

format = sdl_texture_format_map[i];

break;

}

}

video->forcePixelFormat = format.format;

//Create a texture the same size as the video

video->sdlTexture = SDL_CreateTexture(video->sdlRenderer, format.texture_fmt, SDL_TEXTUREACCESS_STREAMING, video->decoder.codecContext->width, video->decoder.codecContext->height);

if (!video->sdlTexture)

{

LOG_ERROR("Create sdl texture error");

return ERRORCODE_SDL_CREATETEXTUREFAILED;

}

}

if (video->forcePixelFormat != video->decoder.codecContext->pix_fmt)

//Convert the pixel format

{

video->swsContext = sws_getCachedContext(video->swsContext, video->decoder.codecContext->width, video->decoder.codecContext->height, video->decoder.codecContext->pix_fmt, video->decoder.codecContext->width, video->decoder.codecContext->height, video->forcePixelFormat, SWS_FAST_BILINEAR, NULL, NULL, NULL);

if (!video->swsContext)

{

LOG_ERROR("sws_getCachedContext failed");

return ERRORCODE_VIDEO_CANNOTGETSWSCONTEX;

}

if (!video->swsBuffer)

{

video->swsBuffer = (uint8_t*)av_malloc(av_image_get_buffer_size(video->forcePixelFormat, video->decoder.codecContext->width, video->decoder.codecContext->height, 64));

if (!video->swsBuffer)

{

LOG_ERROR("video sws output buffer alloc failed");

return ERRORCODE_MEMORY_ALLOCFAILED;

}

}

if (av_image_fill_arrays(dst_data, dst_linesize, video->swsBuffer, video->forcePixelFormat, video->decoder.codecContext->width, video->decoder.codecContext->height, 1) < 0)

{

LOG_ERROR("av_image_fill_arrays failed");

return ERRORCODE_VIDEO_IMAGEFILLARRAYFAILED;

}

if (sws_scale(video->swsContext, frame->data, frame->linesize, 0, frame->height, dst_data, dst_linesize) < 0)

{

LOG_ERROR("Call sws_scale error");

return ERRORCODE_VIDEO_CANNOTRESAMPLEAFRAME;

}

}

else

//No conversion needed

{

memcpy(dst_data, frame->data, sizeof(uint8_t*) * 4);

memcpy(dst_linesize, frame->linesize, sizeof(int) * 4);

}

//Window area

sdlRect.x = 0;

sdlRect.y = 0;

sdlRect.w = video->screen_w;

sdlRect.h = video->screen_h;

//Video area

sdlRect2.x = 0;

sdlRect2.y = 0;

sdlRect2.w = video->decoder.codecContext->width;

sdlRect2.h = video->decoder.codecContext->height;

//Render to the SDL window

SDL_RenderClear(video->sdlRenderer);

SDL_UpdateYUVTexture(video->sdlTexture, &sdlRect2, dst_data[0], dst_linesize[0], dst_data[1], dst_linesize[1], dst_data[2], dst_linesize[2]);

if (video->angle == 0)

SDL_RenderCopy(video->sdlRenderer, video->sdlTexture, NULL, &sdlRect);

else

//Rotate the video

{

SDL_Rect sdlRect3;

sdlRect3= getRotateRect(&sdlRect2,&sdlRect,video->angle);

SDL_RenderCopyEx(video->sdlRenderer, video->sdlTexture, NULL

, &sdlRect3, video->angle, 0, SDL_FLIP_NONE);

}

SDL_RenderPresent(video->sdlRenderer);

return 0;

}

//Video display

static int video_display(Play* play, Video* video) {

if (play->video.decoder.streamIndex == -1)

//No video stream

{

return ERRORCODE_VIDEO_MISSINGSTREAM;

}

if (play->audio.decoder.streamIndex != -1 && video->sofDisplay && !play->audio.sofPlay)

return 0;

AVFrame* frame = NULL;

if (av_fifo_size(video->decoder.fifoFrame) > 0)

{

av_fifo_generic_peek(video->decoder.fifoFrame, &frame, sizeof(AVFrame*), NULL);

//--------------- Clock sync ---------------

AVRational timebase = play->formatContext->streams[video->decoder.streamIndex]->time_base;

//Compute the frame pts in seconds

double pts = frame->pts * (double)timebase.num / timebase.den;

//Frame duration in seconds

double duration = frame->pkt_duration * (double)timebase.num / timebase.den;

double delay = synchronize_updateVideo(&play->synchronize, pts, duration);

if (delay > 0)

//Not due yet: wait

{

play->wakeupTime = getCurrentTime() + delay;

return 0;

}

else if (delay < 0)

//Late: drop the frame

{

av_fifo_generic_read(video->decoder.fifoFrame, &frame, sizeof(AVFrame*), NULL);

av_frame_unref(frame);

av_frame_free(&frame);

return 0;

}

else

//Due: display it

{

av_fifo_generic_read(video->decoder.fifoFrame, &frame, sizeof(AVFrame*), NULL);

}

//--------------- Clock sync --------------- end

}

else if (video->decoder.eofFrame)

{

video->sofDisplay = 1;

//Mark display as finished

video->eofDisplay = 1;

}

if (frame)

{

//Render

video_present(play, video, frame);

av_frame_unref(frame);

av_frame_free(&frame);

if (!video->sofDisplay)

video->sofDisplay = 1;

if (play->step)

{

play->step--;

}

}

return 0;

}

static int interrupt_cb(void* arg) {

Play* play = (Play*)arg;

return play->exitFlag;

}

//Open the input stream

static int packet_open(Play* play) {

//Open the input stream

play->formatContext = avformat_alloc_context();

play->formatContext->interrupt_callback.callback = interrupt_cb;

play->formatContext->interrupt_callback.opaque = play;

if (avformat_open_input(&play->formatContext, play->url, NULL, NULL) != 0) {

LOG_ERROR("Couldn't open input stream");

return ERRORCODE_PACKET_CANNOTOPENINPUTSTREAM;

}

//Read the stream information

if (avformat_find_stream_info(play->formatContext, NULL) < 0) {

LOG_ERROR("Couldn't find stream information");

return ERRORCODE_PACKET_CANNOTFINDSTREAMINFO;

}

//ignore = 0;

play->video.decoder.streamIndex = -1;

//Find the video stream

for (unsigned i = 0; i < play->formatContext->nb_streams; i++)

if (play->formatContext->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_VIDEO) {

play->video.decoder.streamIndex = i;

break;

}

play->audio.decoder.streamIndex = -1;

//Find the audio stream

for (unsigned i = 0; i < play->formatContext->nb_streams; i++)

if (play->formatContext->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_AUDIO) {

play->audio.decoder.streamIndex = i;

break;

}

//No stream found

if (play->video.decoder.streamIndex == -1 && play->audio.decoder.streamIndex == -1) {

LOG_ERROR("Didn't find any stream.");

return ERRORCODE_PACKET_DIDNOTFINDDANYSTREAM;

}

play->eofPacket = 0;

return 0;

}

//Runs on the playback thread after an asynchronous packet read completes

static int packet_readAwait(void* arg)

{

Play* play = (Play*)arg;

if (play->eofPacket == 0)

{

if (play->packet.stream_index == play->video.decoder.streamIndex)

//Push into the video packet queue

{

AVPacket* packet = av_packet_clone(&play->packet);

av_fifo_generic_write(play->video.decoder.fifoPacket, &packet, sizeof(AVPacket*), NULL);

}

else if (play->packet.stream_index == play->audio.decoder.streamIndex)

//Push into the audio packet queue

{

AVPacket* packet = av_packet_clone(&play->packet);

av_fifo_generic_write(play->audio.decoder.fifoPacket, &packet, sizeof(AVPacket*), NULL);

}

av_packet_unref(&play->packet);

}

else if (play->eofPacket == AVERROR_EOF)

{

play->eofPacket = 1;

//Push an empty packet to flush the frames buffered in the decoders

AVPacket* packet = &play->packet;

if (play->audio.decoder.fifoPacket)

av_fifo_generic_write(play->audio.decoder.fifoPacket, &packet, sizeof(AVPacket*), NULL);

if (play->video.decoder.fifoPacket)

av_fifo_generic_write(play->video.decoder.fifoPacket, &packet, sizeof(AVPacket*), NULL);

}

else

{

LOG_ERROR("read packet error!\n");

play->exitFlag = 1;

play->isAsyncReading = 0;

return ERRORCODE_PACKET_READFRAMEFAILED;

}

play->isAsyncReading = 0;

return 0;

}

//Read a packet asynchronously (runs on a thread-pool thread)

static int packet_readAsync(void* arg)

{

Play* play = (Play*)arg;

play->eofPacket = av_read_frame(play->formatContext, &play->packet);

//Hand the packet back to the playback thread

play_beginInvoke(play, packet_readAwait, play);

return 0;

}

//Read a packet

static int packet_read(Play* play) {

if (play->isAsyncReading)

return 0;

if (play->eofPacket)

{

return 0;

}

if (play->video.decoder.streamIndex != -1 && av_fifo_space(play->video.decoder.fifoPacket) < 1)

//Video packet queue is full

{

return 0;

}

if (play->audio.decoder.streamIndex != -1 && av_fifo_space(play->audio.decoder.fifoPacket) < 1)

//Audio packet queue is full

{

return 0;

}

if (!_pool)

//Initialize the thread pool

{

_pool = (ThreadPool*)av_mallocz(sizeof(ThreadPool));

threadPool_init(_pool, 32);

}

play->isAsyncReading = 1;

//Read a packet asynchronously

threadPool_run(_pool, packet_readAsync, play);

return 0;

}

static void play_eventHandler(Play* play);

//Wait for the pending asynchronous read to finish

static void packet_waitAsyncReadFinished(Play* play) {

//Make sure the asynchronous operation has finished

while (play->isAsyncReading)

{

av_usleep(0.01 * 1000000);

play_eventHandler(play);

}

}

//Close the input stream

static void packet_close(Play* play) {

packet_waitAsyncReadFinished(play);

if (play->packet.data)

{

av_packet_unref(&play->packet);

}

if (play->formatContext)

{

avformat_close_input(&play->formatContext);

}

}

//Seek

static int packet_seek(Play* play, double time) {

packet_waitAsyncReadFinished(play);

return avformat_seek_file(play->formatContext, -1, INT64_MIN, time * AV_TIME_BASE, INT64_MAX, 0) >= 0;

}

//Set the window size

void play_setWindowSize(Play* play, int width, int height) {

play->video.screen_w = width;

play->video.screen_h = height;

}

//Seek

void play_seek(Play* play, double time) {

if (time < 0)

{

time = 0;

}

if (packet_seek(play, time))

{

//Reset state

play->audio.accumulateSamples = 0;

play->audio.sofPlay = 0;

play->video.sofDisplay = 0;

//Clear buffered data

decoder_clear(play, &play->video.decoder);

decoder_clear(play, &play->audio.decoder);

avformat_flush(play->formatContext);

if (play->audio.playFifo)

{

SDL_LockMutex(play->audio.mutex);

av_audio_fifo_reset(play->audio.playFifo);

synchronize_reset(&play->synchronize);

SDL_UnlockMutex(play->audio.mutex);

}

else

{

synchronize_reset(&play->synchronize);

}

//Display one frame even while paused

play->step = 1;

}

}

//Pause

void play_pause(Play* play, int isPaused) {

if (play->isPaused == isPaused)

return;

if (!isPaused)

{

play->audio.sofPlay = 0;

play->video.sofDisplay = 0;

synchronize_reset(&play->synchronize);

}

play->isPaused = isPaused;

}

//Set the volume

void play_setVolume(Play* play, int value) {

//Store the volume as an offset so that 0 means maximum volume; after the initial memset no default volume has to be set.

if (value < 0)

value = 0;

value -= SDL_MIX_MAXVOLUME;

if (play->audio.volume == value)

return;

play->audio.volume = value;

}

int play_getVolume(Play* play) {

return play->audio.volume + SDL_MIX_MAXVOLUME;

}

//Event handling

static void play_eventHandler(Play* play) {

PlayMessage msg;

while (messageQueue_poll(&play->mq, &msg)) {

switch (msg.type)

{

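case PLAYMESSAGETYPE_INVOKE:

{

//Before C23 a declaration cannot directly follow a case label, so the case body is wrapped in braces

SDL_ThreadFunction fn = (SDL_ThreadFunction)msg.param1;

fn(msg.param2);

break;

}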

}

}

//Handle window events

SDL_Event sdl_event;

if (SDL_PollEvent(&sdl_event))

{

switch (sdl_event.type)

{

case SDL_WINDOWEVENT:

if (sdl_event.window.event == SDL_WINDOWEVENT_CLOSE)

play->exitFlag = 1;

break;

case SDL_KEYDOWN:

switch (sdl_event.key.keysym.sym) {

case SDLK_UP:

play_setVolume(play, play_getVolume(play) + 20);

break;

case SDLK_DOWN:

play_setVolume(play, play_getVolume(play) - 20);

break;

case SDLK_LEFT:

play_seek(play, synchronize_getMasterTime(&play->synchronize) - 10);

break;

case SDLK_RIGHT:

play_seek(play, synchronize_getMasterTime(&play->synchronize) + 10);

break;

case SDLK_SPACE:

play_pause(play, !play->isPaused);

break;

default:

break;

}

break;

}

}

}

//Playback loop

static int play_loop(Play* play)

{

int ret = 0;

double remainingTime = 0;

while (!play->exitFlag)

{

if (!play->isPaused || play->step)

{

//Demux

if ((ret = packet_read(play)) != 0)

{

LOG_ERROR("read packet error");

ret = ERRORCODE_PLAY_READPACKETERROR;

break;

}

//Decode video

if ((ret = decoder_decode(play, &play->video.decoder)) != 0)

{

LOG_ERROR("video decode error");

ret = ERRORCODE_PLAY_VIDEODECODEERROR;

break;

}

//Decode audio

if ((ret = decoder_decode(play, &play->audio.decoder)) != 0)

{

LOG_ERROR("audio decode error");

ret = ERRORCODE_PLAY_AUDIODECODEERROR;

break;

}

//Wait: sleep for half of the time remaining until the wake-up time

remainingTime = (play->wakeupTime - getCurrentTime()) / 2;

if (remainingTime > 0)

{

av_usleep(remainingTime * 1000000);

}

//Display video

if ((ret = video_display(play, &play->video)) != 0)

{

LOG_ERROR("video display error");

ret = ERRORCODE_PLAY_VIDEODISPLAYERROR;

break;

}

//Play audio

if ((ret = audio_play(play, &play->audio)) != 0)

{

LOG_ERROR("audio play error");

ret = ERRORCODE_PLAY_AUDIOPLAYERROR;

break;

}

//Check for the end of the stream

if ((play->video.decoder.streamIndex == -1 || play->video.eofDisplay) && (play->audio.decoder.streamIndex == -1 || play->audio.eofPlay))

{

if (!play->isLoop)

break;

//Looping: seek back to the start

play->eofPacket = 0;

play->audio.decoder.eofFrame = 0;

play->video.decoder.eofFrame = 0;

play->audio.eofPlay = 0;

play->video.eofDisplay = 0;

play_seek(play, 0);

continue;

}

}

else

{

av_usleep(0.01 * 1000000);

}

//Process messages

play_eventHandler(play);

}

return ret;

}

/// <summary>

/// Play

/// Blocking, single-threaded playback

/// </summary>

/// <param name="play">Player object</param>

/// <param name="url">Input URL: a local path, http, https, rtmp, rtsp, etc.</param>

/// <returns>Error code; 0 means no error</returns>

int play_exec(Play* play, const char* url) {

int ret = 0;

play->url = (char*)url;

//Open the input stream

if ((ret = packet_open(play)) != 0)

{

LOG_ERROR("open input error");

ret = ERRORCODE_PLAY_OPENINPUTSTREAMFAILED;

goto end;

}

//Initialize the video module

if (play->video.decoder.streamIndex != -1)

{

if ((ret = video_init(play, &play->video)) != 0)

{

LOG_ERROR("init video error");

ret = ERRORCODE_PLAY_VIDEOINITFAILED;

goto end;

}

}

//Initialize the audio module

if (play->audio.decoder.streamIndex != -1)

{

if ((ret = audio_init(play, &play->audio)) != 0)

{

LOG_ERROR("init audio error");

ret = ERRORCODE_PLAY_AUDIOINITFAILED;

goto end;

}

}

else

{

play->synchronize.type = SYNCHRONIZETYPE_ABSOLUTE;

}

//Initialize the message queue

if ((ret = messageQueue_init(&play->mq, 500, sizeof(PlayMessage))) != 0)

{

LOG_ERROR("init message queue error");

ret = ERRORCODE_PLAY_OPENINPUTSTREAMFAILED;

goto end;

}

//Enter the playback loop: decode, render, play

if ((ret = play_loop(play)) != 0)

{

ret = ERRORCODE_PLAY_LOOPERROR;

}

end:

//Release resources

if (play->video.decoder.streamIndex != -1)

{

video_deinit(play, &play->video);

}

if (play->audio.decoder.streamIndex != -1)

{

audio_deinit(play, &play->audio);

}

packet_close(play);

synchronize_reset(&play->synchronize);

messageQueue_deinit(&play->mq);

play->url = NULL;

play->exitFlag = 0;

return ret;

}

/// <summary>

/// Stop playback

/// </summary>

/// <param name="play">Player object</param>

void play_exit(Play* play)

{

play->exitFlag = 1;

}

#undef main

int main(int argc, char** argv) {

Play play;

//memset serves as initialization

memset(&play, 0, sizeof(Play));

play_setWindowSize(&play, 640, 360);

play.isLoop = 1;

//Blocking single-threaded playback

return play_exec(&play, "D:\\test.mp4");

}
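Two pieces of audio timing arithmetic in the listing above are easy to misread, so here is a standalone sketch of just the numbers. `audio_init` asks SDL for a callback buffer of `FFMAX(512, 2 << av_log2(freq / 30))` samples, and `audio_play` sets the audio clock to the frame's pts minus the duration of the samples still waiting in `playFifo`. The helper names below are hypothetical, and `floor_log2` is a local stand-in for FFmpeg's `av_log2` (floor of log2) so the sketch compiles without FFmpeg or SDL.

```c
/* Hypothetical helpers reproducing the timing arithmetic of audio_init and
   audio_play, without depending on FFmpeg or SDL. */

/* floor(log2(v)), returning 0 for v == 0; local stand-in for FFmpeg's av_log2(). */
static int floor_log2(unsigned v) {
    int n = 0;
    while (v >>= 1)
        n++;
    return n;
}

/* Callback buffer size requested in audio_init:
   FFMAX(512, 2 << av_log2(freq / 30)), i.e. a power of two holding between
   about 1/30 s and 1/15 s of audio, but never fewer than 512 samples. */
static int callback_samples(int freq) {
    int samples = 2 << floor_log2((unsigned)(freq / 30));
    return samples > 512 ? samples : 512;
}

/* Clock correction from audio_play: the frame pts (in stream time_base units)
   converted to seconds, minus the duration of the samples still queued. */
static double corrected_audio_clock(long long pts, int tb_num, int tb_den,
                                    int queued_samples, int freq) {
    double frame_time = (double)pts * tb_num / tb_den;
    return frame_time - (double)queued_samples / freq;
}
```

For 44100 Hz this gives 2048 samples per callback (about 46 ms). A frame stamped at 10 s while 4410 samples (0.1 s) are still queued yields an audio clock of 9.9 s, which is what the listener is actually hearing at that moment.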

Complete project (VS2022 solution and makefile): it runs on both Windows and Linux; on Linux you need to set up FFmpeg and SDL yourself.

4. Usage Example

#undef main

int main(int argc, char** argv) {

Play play;

//memset serves as initialization

memset(&play, 0, sizeof(Play));

play_setWindowSize(&play, 640, 360);

play.isLoop = 1;

//Blocking single-threaded playback. Left: seek back, Right: seek forward, Up/Down: volume, Space: pause.

return play_exec(&play, "D:\\test.mp4");

}
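The Up/Down keys in this example go through `play_setVolume`/`play_getVolume`, which store the volume as an offset from `SDL_MIX_MAXVOLUME` so that a zero-filled `Play` already means full volume, and `audio_callback` mixes through `SDL_MixAudioFormat` only when that offset is non-zero. Below is a minimal sketch of the same encoding. `VolumeState` and the function names are hypothetical, and `MIX_MAXVOLUME` mirrors SDL2's `SDL_MIX_MAXVOLUME` (128). The upper clamp is an addition of this sketch: the original only clamps at 0, so repeated Up presses can push the value above 128.

```c
/* Mirrors SDL2's SDL_MIX_MAXVOLUME (128) so the sketch builds without SDL. */
#define MIX_MAXVOLUME 128

/* Hypothetical stand-in for play->audio.volume: 0 means full volume. */
typedef struct { int volume; } VolumeState;

static void volume_set(VolumeState* v, int value) {
    if (value < 0)
        value = 0;
    if (value > MIX_MAXVOLUME)      /* extra clamp, not in the original play_setVolume */
        value = MIX_MAXVOLUME;
    v->volume = value - MIX_MAXVOLUME;  /* store as an offset from the maximum */
}

static int volume_get(const VolumeState* v) {
    return v->volume + MIX_MAXVOLUME;
}

/* audio_callback takes the SDL_MixAudioFormat path only when this is true. */
static int volume_needs_mixing(const VolumeState* v) {
    return v->volume != 0;
}
```

Starting from a zeroed `VolumeState`, `volume_get` returns 128 and no mixing is needed; one Down press (`volume_get() - 20`) leaves 108 and switches the callback to the mixing path.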

Summary

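That is all for this article. The player described here shows that single-threaded playback is feasible: local files in particular can be played entirely on one thread, which helps a great deal when building a video editing tool, since each additional track needs only one more thread. With asynchronous packet reading, network streams also play correctly, and two threads per video is still lighter than ffplay's four or more.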
