A Detailed Example of Batch-Scraping Product Data with Python

Contents

- Goal

- Key points

- Development environment

- Code

Goal

Use Python to batch-scrape listing data for one kind of product.

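Key points

requests: sending HTTP requests

re: parsing data out of the page source

json: extracting fields from JSON data

csv: saving the data as a table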
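As a rough illustration of how the re and json steps fit together, the sketch below pulls a JSON object out of an inline script assignment and reads two fields from it. The HTML fragment and its values are made up for the example; only the `g_page_config` variable name and the key path follow the code later in this article.

```python
import json
import re

# Hypothetical page fragment: the data sits in a JavaScript assignment.
SAMPLE_HTML = """
<script>
    g_page_config = {"mods": {"itemlist": {"data": {"auctions": [{"raw_title": "demo item", "view_price": "9.90"}]}}}};
    g_srp_loadCss();
</script>
"""


def extract_page_config(html):
    """Return the parsed g_page_config object, or None if it is absent."""
    # `.` does not match newlines, so the greedy group stops at the
    # last semicolon on the same line as the assignment.
    match = re.search(r'g_page_config = (.*);', html)
    if match is None:
        return None
    return json.loads(match.group(1))


config = extract_page_config(SAMPLE_HTML)
auctions = config['mods']['itemlist']['data']['auctions']
print(auctions[0]['raw_title'], auctions[0]['view_price'])
```

Returning None when the pattern is missing makes it easy to detect a page that did not contain the expected data, for example a login or verification page.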

Development environment

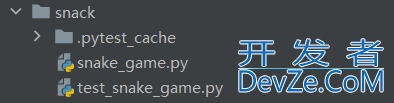

Python 3.8

PyCharm

requests

Code

Import modules

import csv
import json
import random
import re
import time

import pymysql
import requests

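Core code

def save_sql(title, pic_url, detail_url, view_price, item_loc, view_sales, nick):
    """Insert one product row into the goods table of a MySQL database."""
    # Connect to the database
    conn = pymysql.connect(
        host='xxx.xxx.xxx.xxx',  # database host
        port=3306,  # database port
        user='xxxx',  # database user
        password='xxxx',  # database password
        database='xxxx',  # database name
    )
    try:
        # Create a cursor; it is closed automatically when the block exits
        with conn.cursor() as cursor:
            # Use placeholders so the driver escapes the values
            sql = (
                'insert into goods(title, pic_url, detail_url, view_price, item_loc, view_sales, nick) '
                'values (%s, %s, %s, %s, %s, %s, %s)'
            )
            # Execute the SQL
            cursor.execute(sql, (title, pic_url, detail_url, view_price, item_loc, view_sales, nick))
        # Commit the change
        conn.commit()
    finally:
        # Always release the connection, even if the insert fails
        conn.close()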
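SQL built by interpolating values directly into a string breaks as soon as a title contains a quote character, and it is open to SQL injection; passing the values separately as parameters avoids both. A minimal sketch of that parameterized pattern follows. It uses the standard-library sqlite3 module with an in-memory database so that it runs without a server; this is a stand-in, not the article's MySQL setup, and the column types are assumptions. With pymysql the pattern is the same, but the placeholder is `%s` rather than `?`.

```python
import sqlite3

# In-memory database as a stand-in for the MySQL server (assumption).
conn = sqlite3.connect(':memory:')
conn.execute(
    'CREATE TABLE goods (title TEXT, pic_url TEXT, detail_url TEXT, '
    'view_price REAL, item_loc TEXT, view_sales TEXT, nick TEXT)'
)


def save_row(conn, row):
    """Insert one 7-field row; the driver handles quoting of each value."""
    conn.execute('INSERT INTO goods VALUES (?, ?, ?, ?, ?, ?, ?)', row)
    conn.commit()


# The apostrophe in the title would break a hand-built SQL string.
row = ("Women's socks (demo)", 'pic', 'detail', 9.9, 'Hangzhou', '100+', 'shop')
save_row(conn, row)
stored_title = conn.execute('SELECT title FROM goods').fetchone()[0]
row_count = conn.execute('SELECT COUNT(*) FROM goods').fetchone()[0]
print(stored_title, row_count)
```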

headers = {
    'cookie': 'miid=4137864361077413341; tracknick=%5Cu5218%5Cu6587%5Cu9F9978083283; thw=cn; hng=CN%7Czh-CN%7CCNY%7C156; cna=MNI4GicXYTQCAa8APqlAWWiS; enc=%2FWC5TlhZCGfEq7Zm4Y7wyNToESfZVxhucOmHkanuKyUkH1YNHBFXacrDRNdCFeeY9y5ztSufV535NI0AkjeX4g%3D%3D; t=ad15767ffa6febb4d2a8709edebf63d3; lgc=%5Cu5218%5Cu6587%5Cu9F9978083283; sgcookie=E100EcWpAN49d4Uc3MkldEc205AxRTa81RfV4IC8X8yOM08mjVtdhtulkYwYybKSRnCaLHGsk1mJ6lMa1TO3vTFmr7MTW3mHm92jAsN%2BOA528auARfjf2rnOV%2Bx25dm%2BYC6l; uc3=nk2=ogczBg70hCZ6AbZiWjM%3D&vt3=F8dCvCogB1%2F5Sh1kqHY%3D&lg2=Vq8l%2BKCLz3%2F65A%3D%3D&id2=UNGWOjVj4Vjzwg%3D%3D; uc4=nk4=0%40oAWoex2a2MA2%2F2I%2FjFnivZpTtTp%2F2YKSTg%3D%3D&id4=0%40UgbuMZOge7ar3lxd0xayM%2BsqyxOW; _cc_=W5iHLLyFfA%3D%3D; _m_h5_tk=ac589fc01c86be5353b640607e791528_1647451667088; _m_h5_tk_enc=7d452e4e140345814d5748c3e31fc355; xlly_s=1; x5sec=7b227365617263686170703b32223a223264393234316334363365353038663531353163633366363036346635356431434c61583635454745506163324f2f6b2b2b4b6166686f4d4d7a45774e7a4d794d6a59324e4473784d4b6546677037382f2f2f2f2f77453d227d; JSESSIONID=1F7E942AC30122D1C7DBA22C429521B9; tfstk=cKKGBRTY1F71aDbHPcs6LYjFVa0dZV2F6iSeY3hEAYkCuZxFizaUz1sbK1hS_r1..; l=eBEVp-O4gnqzSzLbBOfwnurza77OIIRAguPzaNbMiOCPO75p5zbNW60wl4L9CnGVhsTMR3lRBzU9BeYBqo44n5U62j-la1Hmn; isg=BDw8SnVxcvXZcEU4ugf-vTadDdruNeBfG0WXdBa9WicK4dxrPkd97hHTxQmZqRi3',
    'referer': 'https://s.taobao.com/search?q=%E4%B8%9D%E8%A2%9C&imgfile=&js=1&stats_click=search_radio_all%3A1&initiative_id=staobaoz_20220323&ie=utf8&bcoffset=1&ntoffset=1&p4ppushleft=2%2C48&s=',
    'sec-ch-ua': '" Not A;Brand";v="99", "Chromium";v="99", "Google Chrome";v="99"',
    'sec-ch-ua-mobile': '?0',
    'sec-ch-ua-platform': '"Windows"',
    'sec-fetch-dest': 'document',
    'sec-fetch-mode': 'navigate',
    'sec-fetch-site': 'same-origin',
    'sec-fetch-user': '?1',
    'upgrade-insecure-requests': '1',
    'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/99.0.4844.82 Safari/537.36',
}
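The percent-encoded query string seen in the referer above does not have to be written by hand. As a small sketch, the standard-library `urlencode` can assemble it from a dict, including the UTF-8 encoding of the search keyword. The helper name `build_search_url` and the reduced parameter set (`q`, `ie`, `s`) are choices made for this example; whether the site accepts a URL with only these parameters is not verified here.

```python
from urllib.parse import urlencode

BASE_URL = 'https://s.taobao.com/search'
ITEMS_PER_PAGE = 44  # step of the `s` offset used in this article's URL


def build_search_url(keyword, page_index):
    """Build a search URL; page_index 0 gives offset 0, 1 gives 44, and so on."""
    params = {
        'q': keyword,  # urlencode percent-encodes this as UTF-8
        'ie': 'utf8',
        's': ITEMS_PER_PAGE * page_index,
    }
    return f'{BASE_URL}?{urlencode(params)}'


url = build_search_url('丝袜', 2)
print(url)
```

Keeping the keyword and offset as plain values also makes it simple to switch to a different search term without editing an encoded string.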

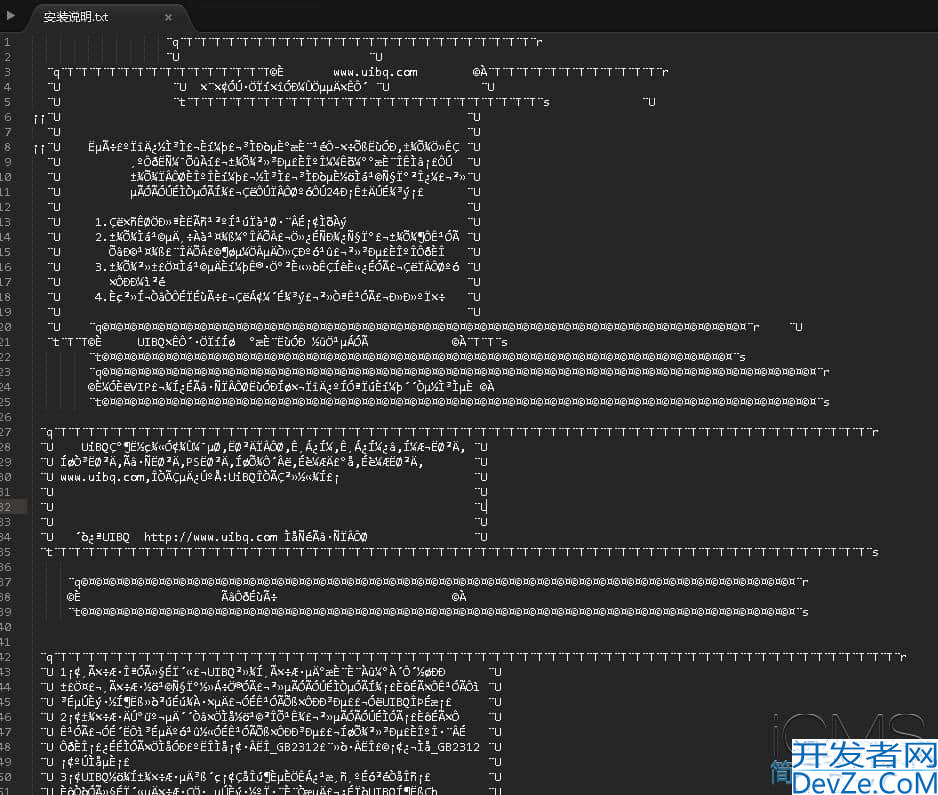

with open('淘宝.csv', mode='a', encoding='utf-8', newline='') as f:
    # Write the header row before any data rows
    csv_writer = csv.writer(f)
    csv_writer.writerow(['title', 'pic_url', 'detail_url', 'view_price', 'item_loc', 'view_sales', 'nick'])

for page in range(1, 101):
    # `s` is the result offset and advances by 44 per page
    url = f'https://s.taobao.com/search?q=%E4%B8%9D%E8%A2%9C&imgfile=&js=1&stats_click=search_radio_all%3A1&initiative_id=staobaoz_20220323&ie=utf8&bcoffset=1&ntoffset=1&p4ppushleft=2%2C48&s={44 * page}'
    response = requests.get(url=url, headers=headers, timeout=10)
    # The item data is embedded in the page source as a JavaScript object
    json_str = re.findall(r'g_page_config = (.*);', response.text)[0]
    json_data = json.loads(json_str)
    auctions = json_data['mods']['itemlist']['data']['auctions']
    for auction in auctions:
        try:
            title = auction['raw_title']
            pic_url = auction['pic_url']
            detail_url = auction['detail_url']
            view_price = auction['view_price']
            item_loc = auction['item_loc']
            view_sales = auction['view_sales']
            nick = auction['nick']
            print(title, pic_url, detail_url, view_price, item_loc, view_sales, nick)
            save_sql(title, pic_url, detail_url, view_price, item_loc, view_sales, nick)
            with open('淘宝.csv', mode='a', encoding='utf-8', newline='') as f:
                csv_writer = csv.writer(f)
                csv_writer.writerow([title, pic_url, detail_url, view_price, item_loc, view_sales, nick])
        except Exception as exc:
            # Skip items with missing fields or failed inserts, but report them
            print(f'skipped one item: {exc!r}')
    # Pause 3 to 5 seconds between pages
    time.sleep(random.randint(3, 5))
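Two details of the CSV handling above are worth a second look: opening the file in append mode and writing the header unconditionally adds a new header row on every run, and the file is reopened for each item. One possible alternative, sketched below, writes the header only when the file is new or empty and appends a whole page of rows per call. The helper name `append_rows` and the demo file path are hypothetical; the field names are the ones used in this article.

```python
import csv
import os
import tempfile

FIELDS = ['title', 'pic_url', 'detail_url', 'view_price', 'item_loc', 'view_sales', 'nick']


def append_rows(path, rows):
    """Append dict rows to a CSV file, writing the header only for a new or empty file."""
    need_header = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, mode='a', encoding='utf-8', newline='') as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if need_header:
            writer.writeheader()
        writer.writerows(rows)


# Demo: two separate calls still produce a single header row.
demo_path = os.path.join(tempfile.mkdtemp(), 'demo.csv')
demo_row = dict.fromkeys(FIELDS, 'x')
append_rows(demo_path, [demo_row])
append_rows(demo_path, [demo_row])
with open(demo_path, encoding='utf-8', newline='') as f:
    lines = list(csv.reader(f))
print(len(lines))
```

In the scraping loop, the rows for one page could be collected in a list and passed to such a helper once per page.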

Results
