What are good CLI tools for JSON?

General Problem

Though I may be diagnosing the root cause of an event, determining how many users it affected, or distilling timing logs in order to assess the performance and throughput impact of a recent code change, my tools stay the same: grep, awk, sed, tr, uniq, sort, zcat, tail, head, join, and split. To glue them all together, Unix gives us pipes, and for fancier filtering we have xargs. If these fail me, there's always perl -e.

These tools are perfect for processing CSV files, tab-delimited files, log files with a predictable line format, or files with comma-separated key-value pairs. In other words, files where each line has next to no context.

XML Analogues

I recently needed to trawl through Gigabytes of XML to build a histogram of usage by user. This was easy enough with the tools I had, but for more complicated queries the normal approaches break down. Say I have files with items like this:

<foo user="me">

<baz key="zoidberg" value="squid" />

<baz key="leela" value="cyclops" />

<baz key="fry" value="rube" />

</foo>

And let's say I want to produce a mapping from user to average number of <baz>s per <foo>. Processing line-by-line is no longer an option: I need to know which user's <foo> I'm currently inspecting so I know whose average to update. Any sort of Unix one liner that accomplishes this task is likely to be inscrutable.

Fortunately in XML-land, we have wonderful technologies like XPath, XQuery, and XSLT to help us.

Previously, I had gotten accustomed to using the wonderful XML::XPath Perl module to accomplish queries like the one above, but after finding a TextMate Plugin that could run an XPath expression against my current window, I stopped writing one-off Perl scripts to query XML. And I just found out about XMLStarlet which is installing as I type this and which I look forward to using in the future.

JSON Solutions?

So this leads me to my question: are there any tools like this for JSON? It's only a matter of time before some investigation task requires me to do similar queries on JSON files, and without tools like XPath and XSLT, such a task will be a lot harder. If I had a bunch of JSON that looked like this:

{

"firstName": "Bender",

"lastName": "Robot",

"age": 200,

"address": {

"streetAddress": "123",

"city": "New York",

"state": "NY",

"postalCode": "1729"

},

"phoneNumber": [

{ "type": "home", "number": "666 555-1234" },

{ "type": "fax", "number": "666 555-4567" }

]

}

And wanted to find the average number of phone numbers each person had, I could do something like this with XPath:

fn:avg(/fn:count(phoneNumber))

Questions

- Are there any command-line tools that can "query" JSON files in this way?

- If you have to process a bunch of JSON files on a Unix command line, what tools do you use?

- Heck, is there even work being done to make a query language like this for JSON?

- If you do use tools like this in your day-to-day work, what do you like/dislike about them? Are there any gotchas?

I'm 开发者_JAVA技巧noticing more and more data serialization is being done using JSON, so processing tools like this will be crucial when analyzing large data dumps in the future. Language libraries for JSON are very strong and it's easy enough to write scripts to do this sort of processing, but to really let people play around with the data shell tools are needed.

Related Questions

- Grep and Sed Equivalent for XML Command Line Processing

- Is there a query language for JSON?

- JSONPath or other XPath like utility for JSON/Javascript; or Jquery JSON

I just found this:

http://stedolan.github.io/jq/

"jq is a lightweight and flexible command-line JSON processor."

2014 update:

@user456584 mentioned:

There's also the 'json' command (e.g. 'jsontool'). I tend to prefer it over jq. Very UNIX-y. Here's a link to the project: github.com/trentm/json –

in the json README at http://github.com/trentm/json there is a long list of similar things

- jq: http://stedolan.github.io/jq/

- json:select: http://jsonselect.org/

- jsonpipe: https://github.com/dvxhouse/jsonpipe

- json-command: https://github.com/zpoley/json-command

- JSONPath: http://goessner.net/articles/JsonPath/, http://code.google.com/p/jsonpath/wiki/Javascript

- jsawk: https://github.com/micha/jsawk

- jshon: http://kmkeen.com/jshon/

- json2: https://github.com/vi/json2

- fx: https://github.com/antonmedv/fx

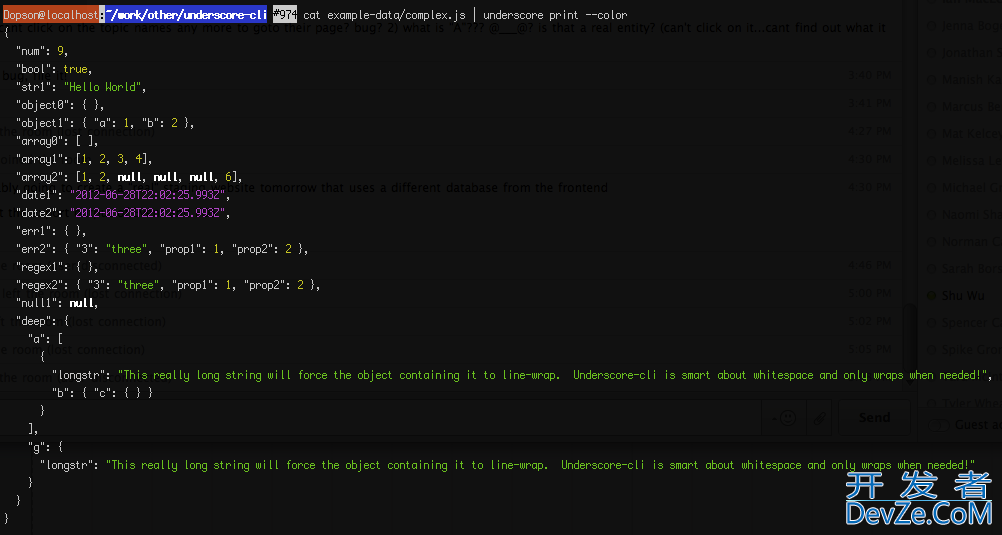

I have created a module specifically designed for command-line JSON manipulation:

https://github.com/ddopson/underscore-cli

- FLEXIBLE - THE "swiss-army-knife" tool for processing JSON data - can be used as a simple pretty-printer, or as a full-powered Javascript command-line

- POWERFUL - Exposes the full power and functionality of underscore.js (plus underscore.string)

- SIMPLE - Makes it simple to write JS one-liners similar to using "perl -pe"

- CHAINED - Multiple command invokations can be chained together to create a data processing pipeline

- MULTI-FORMAT - Rich support for input / output formats - pretty-printing, strict JSON, etc [coming soon]

- DOCUMENTED - Excellent command-line documentation with multiple examples for every command

It allows you to do powerful things really easily:

cat earthporn.json | underscore select '.data .title'

# [ 'Fjaðrárgljúfur canyon, Iceland [OC] [683x1024]',

# 'New town, Edinburgh, Scotland [4320 x 3240]',

# 'Sunrise in Bryce Canyon, UT [1120x700] [OC]',

# ...

# 'Kariega Game Reserve, South Africa [3584x2688]',

# 'Valle de la Luna, Chile [OS] [1024x683]',

# 'Frosted trees after a snowstorm in Laax, Switzerland [OC] [1072x712]' ]

cat earthporn.json | underscore select '.data .title' | underscore count

# 25

underscore map --data '[1, 2, 3, 4]' 'value+1'

# prints: [ 2, 3, 4, 5 ]

underscore map --data '{"a": [1, 4], "b": [2, 8]}' '_.max(value)'

# [ 4, 8 ]

echo '{"foo":1, "bar":2}' | underscore map -q 'console.log("key = ", key)'

# key = foo

# key = bar

underscore pluck --data "[{name : 'moe', age : 40}, {name : 'larry', age : 50}, {name : 'curly', age : 60}]" name

# [ 'moe', 'larry', 'curly' ]

underscore keys --data '{name : "larry", age : 50}'

# [ 'name', 'age' ]

underscore reduce --data '[1, 2, 3, 4]' 'total+value'

# 10

It has a very nice command-line help system and is extremely flexible. It is well tested and ready for use; however, I'm still building out a few of the features like alternatives for input/output format, and merging in my template handling tool (see TODO.md). If you have any feature requests, comment on this post or add an issue in github. I've designed out a pretty extensive feature-set, but I'd be glad to prioritize features that are needed by members of the community.

One way you could do is to convert it to XML. Following uses two perl modules (JSON and XML::Simple) to do fly-by conversion:

cat test.json | perl -MJSON -MXML::Simple -e 'print XMLout(decode_json(do{local$/;<>}),RootName=>"json")'

which for your example json ends up as:

<json age="200" firstName="Bender" lastName="Robot">

<address city="New York" postalCode="1729" state="NY" streetAddress="123" />

<phoneNumber number="666 555-1234" type="home" />

<phoneNumber number="666 555-4567" type="fax" />

</json>

Have a look at this insane project jsawk. It is design to filter through JSON input from the command line. Check resty as well for a command line REST client that you can use in pipelines that may come in handy.

Recently I discovered that JSON can easily be eval-ed with Python:

$ python -c "json=eval(open('/json.txt').read()); print len(json['phoneNumber'])"

2

Though the method will obviously fail if the JSON input contains nulls.

There is also interactive terminal tool — fx

Pipe into fx any JSON and anonymous function for reducing it.

$ echo '{...}' | fx [code ...]

Start interactive mode without passing any arguments:

$ curl ... | fx

- https://github.com/antonmedv/fx

Have a look at the f:json-document() from the FXSL 2.x library.

Using this function it is extremely easy to incorporate JSon and use it just as... XML.

For example, one can just write the following XPath expression:

f:json-document($vstrParam)/Students/*[sex = 'Female']

and get all children of Students with sex = 'Female'

Here is the complete example:

<xsl:stylesheet version="2.0"

xmlns:xsl="http://www.w3.org/1999/XSL/Transform"

xmlns:xs="http://www.w3.org/2001/XMLSchema"

xmlns:f="http://fxsl.sf.net/"

exclude-result-prefixes="f xs"

>

<xsl:import href="../f/func-json-document.xsl"/>

<xsl:output omit-xml-declaration="yes" indent="yes"/>

<xsl:variable name="vstrParam" as="xs:string">

{

"teacher":{

"name":

"Mr Borat",

"age":

"35",

"Nationality":

"Kazakhstan"

},

"Class":{

"Semester":

"Summer",

"Room":

null,

"Subject":

"Politics",

"Notes":

"We're happy, you happy?"

},

"Students":

{

"Smith":

{"First Name":"Mary","sex":"Female"},

"Brown":

{"First Name":"John","sex":"Male"},

"Jackson":

{"First Name":"Jackie","sex":"Female"}

}

,

"Grades":

{

"Test":

[

{"grade":"A","points":68,"grade":"B","points":25,"grade":"C","points":15},

{"grade":"C","points":2, "grade":"B","points":29, "grade":"A","points":55},

{"grade":"C","points":2, "grade":"A","points":72, "grade":"A","points":65}

]

}

}

</xsl:variable>

<xsl:template match="/">

<xsl:sequence select=

"f:json-document($vstrParam)/Students/*[sex = 'Female']"/>

</xsl:template>

</xsl:stylesheet>

When the above transformation is applied on any XML document (ignored), the correct result is produced:

<Smith>

<First_Name>Mary</First_Name>

<sex>Female</sex>

</Smith>

<Jackson>

<First_Name>Jackie</First_Name>

<sex>Female</sex>

</Jackson>

Fortunately in XML-land, we have wonderful technologies like XPath, XQuery, and XSLT to help us.

[...]

So this leads me to my question: are there any tools like this for JSON?

If you ask me, xidel is exactly what you're looking for.

Xidel is a command line tool to download and extract data from HTML/XML pages or JSON-APIs, using CSS, XPath 3.0, XQuery 3.0, JSONiq or pattern templates. It can also create new or transformed XML/HTML/JSON documents.

$ xidel -s "input.json" -e '

$json/avg(

count((phoneNumber)())

)

'

2

加载中,请稍侯......

加载中,请稍侯......

精彩评论